A guide for CTOs and DevSecOps engineers on hardening local AI deployments. Just because it is local does not mean it is safe.

Key Sections:

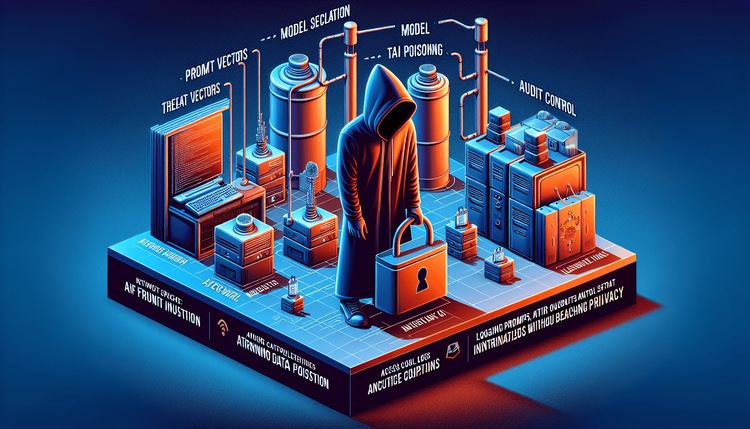

1. **Risk Vectors:** Prompt injection, model theft, training data poisoning.

2. **Network Security:** Air-gapping requirements, mTLS for inference traffic.

3. **Access Control:** Implementing API keys and usage quotas for internal LLM APIs.

4. **Audit Logs:** Logging prompts and completions (without violating privacy policies).

5. **Sanitization:** Input/output guardrails using tools like Guardrails AI.
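
To make items 3 and 4 more concrete, here is a minimal Python sketch of API key checks, per-client usage quotas, and privacy-aware audit records for an internal LLM API. It uses only the standard library. Every name in it (`API_KEY_DIGESTS`, `authenticate`, `UsageQuota`, `redact`, `audit_record`, the demo key) is hypothetical and not taken from the article, and the email-only redaction is a stand-in for whatever your privacy policy actually requires. It does not use or imitate the Guardrails AI API.

```python
import hashlib
import hmac
import json
import re
import time
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical key store: SHA-256 digests of issued keys mapped to a client name.
# A real deployment would load these from a secrets manager, not source code.
API_KEY_DIGESTS = {
    hashlib.sha256(b"demo-key-123").hexdigest(): "team-search",
}

# Assumption: email addresses are the only sensitive pattern to redact here.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def authenticate(api_key: str) -> Optional[str]:
    """Return the client name for a valid key, else None."""
    digest = hashlib.sha256(api_key.encode()).hexdigest()
    for known, client in API_KEY_DIGESTS.items():
        # Constant-time comparison avoids leaking match position via timing.
        if hmac.compare_digest(digest, known):
            return client
    return None


@dataclass
class UsageQuota:
    """Fixed-window request quota tracked per client, in memory."""
    limit: int
    window_seconds: float
    _windows: dict = field(default_factory=dict)

    def allow(self, client: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        start, count = self._windows.get(client, (now, 0))
        if now - start >= self.window_seconds:
            start, count = now, 0  # window expired, start a new one
        if count >= self.limit:
            self._windows[client] = (start, count)
            return False
        self._windows[client] = (start, count + 1)
        return True


def redact(text: str) -> str:
    """Mask email addresses before the text reaches the audit log."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


def audit_record(client: str, prompt: str, completion: str) -> str:
    """Build one JSON audit line with redacted prompt and completion."""
    return json.dumps({
        "ts": time.time(),
        "client": client,
        "prompt": redact(prompt),
        "completion": redact(completion),
    })
```

In a request handler, the order would typically be `authenticate`, then `UsageQuota.allow`, then the model call, then `audit_record`. The in-memory quota only works for a single process; a shared store would be needed once the API runs on more than one replica.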

**Internal Linking Strategy:** Link to Pillar. Link to ‘Deploying to Kubernetes’.

Continue reading Enterprise Local AI: A Security & Compliance Checklist on SitePoint.