Vector databases are generally used to store vector embeddings for tasks like similarity search, which is used to build recommendation and question-answering systems. Milvus is an open-source database that stores embeddings in the form of vector data. It is well suited to these tasks because it has indexing features like Approximate Nearest Neighbours (ANN) that enable fast and accurate results.

In this article, we’ll demonstrate how to use a HuggingFace dataset, create embeddings from the dataset, and divide the dataset into two parts (testing and training). You’ll also learn how to store all the created embeddings in the deployed Milvus database by creating a collection, then perform a search operation by giving a question prompt and generating the most similar answers.

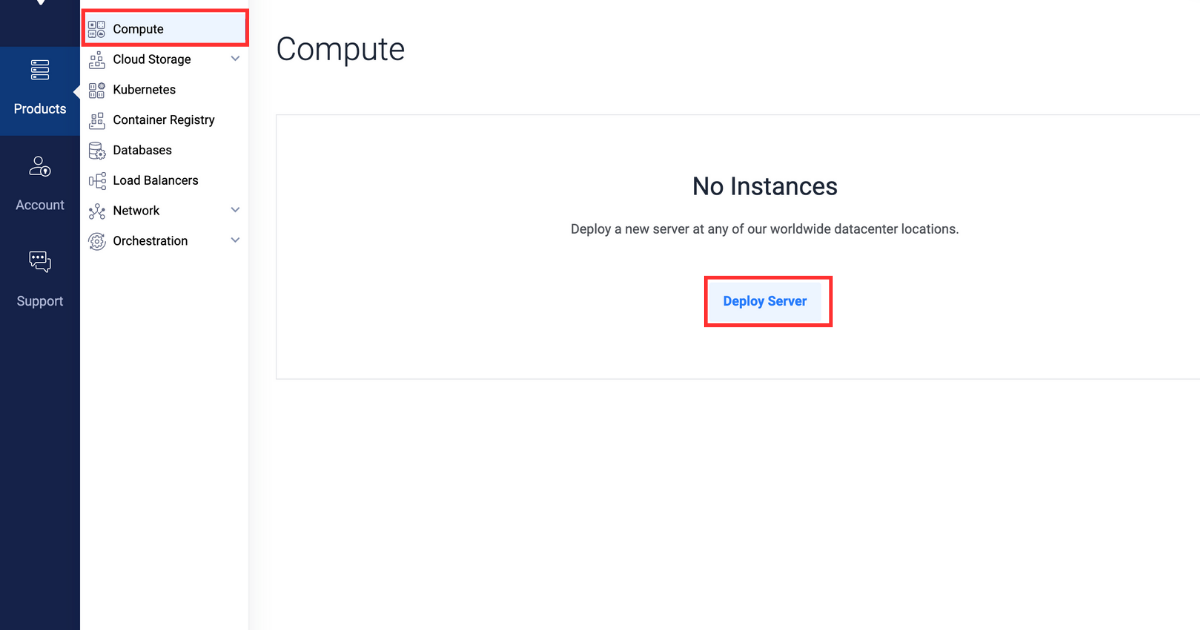

Deploying a server on Vultr

- Sign up and log in to the Vultr Customer Portal.

- Navigate to the Products page.

- From the side menu, select Compute.

- Click the Deploy Server button in the center.

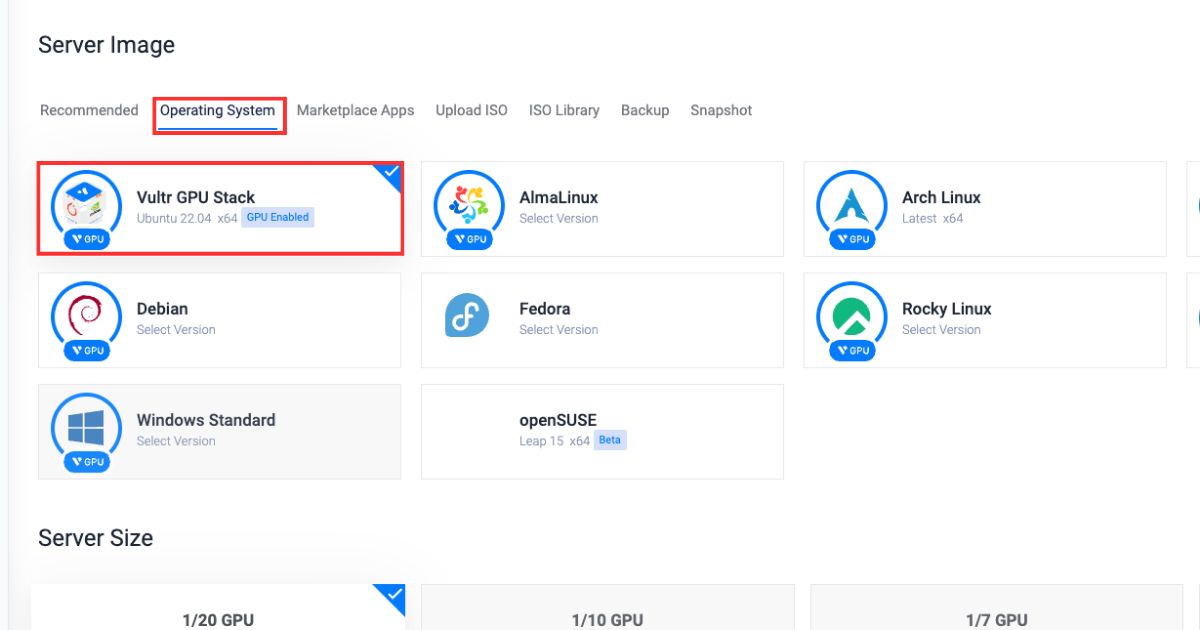

- Select Cloud GPU as the server type.

- Select A100 as the GPU type.

- In the “Server Location” section, select the region of your choice.

- In the “Operating System” section, select Vultr GPU Stack as the operating system.

Vultr GPU Stack is designed to streamline the process of building Artificial Intelligence (AI) and Machine Learning (ML) projects by providing a comprehensive suite of pre-installed software, including NVIDIA CUDA Toolkit, NVIDIA cuDNN, TensorFlow, PyTorch, and so on.

- In the “Server Size” section, select the 80 GB option.

- Select any more features as required in the “Additional Features” section.

- Click the Deploy Now button on the bottom right corner.

Deploying a Kubernetes cluster on Vultr

- Navigate to the Products page.

- From the side menu, select Kubernetes.

- Click the Add Cluster button in the center.

- Type in a Cluster Name.

- In the “Cluster Location” section, select the region of your choice.

- Type in a Label for the cluster pool.

- Increase the Number of Nodes to 5.

- Click the Deploy Now button on the bottom right corner.

Preparing the server

Installing the required packages

After setting up a Vultr server and a Vultr Kubernetes cluster as described earlier, this section guides you through installing the Python packages needed to work with a Milvus database and importing the necessary modules in the Python console.

- Install the required dependencies.

      pip install transformers datasets pymilvus torch

  Here’s what each package represents:

  - transformers: Provides access to pre-trained models for tasks like text classification and generation.
  - datasets: Provides access to ready-to-use datasets for NLP tasks.
  - pymilvus: Python client for Milvus that allows vector similarity search, storage, and management of large collections of vectors.
  - torch: Machine learning library used for training and building deep learning models.

- Access the Python console.

      python3

- Import the required modules.

      from pymilvus import connections, FieldSchema, CollectionSchema, DataType, Collection, utility
      from datasets import load_dataset_builder, load_dataset, Dataset
      from transformers import AutoTokenizer, AutoModel
      # Note: this import shadows Python's built-in sum() with torch.sum
      from torch import clamp, sum

  Here’s what each module represents:

  pymilvus modules:

  - connections: Provides functions for managing connections with the Milvus database.
  - FieldSchema: Defines the schema of fields in a Milvus database.
  - CollectionSchema: Defines the schema of the collection.
  - DataType: Enumerates the data types that can be used in a Milvus collection.
  - Collection: Provides the functionality to interact with Milvus collections to create, insert, and search for vectors.
  - utility: Provides helper functions for working with Milvus, such as checking whether a collection exists and dropping it.

  datasets modules:

  - load_dataset_builder: Loads a dataset builder, which gives access to the dataset information and its metadata.
  - load_dataset: Loads a dataset and returns the dataset object for data access.
  - Dataset: Represents a dataset, providing access to data-related operations.

  transformers modules:

  - AutoTokenizer: Loads the pre-trained tokenizer that matches a given model.
  - AutoModel: A model loading class for automatically loading pre-trained models for NLP tasks.

  torch modules:

  - clamp: Limits tensor values element-wise to a given range.
  - sum: Computes the sum of tensor elements along specified dimensions.

Building a question-answering architecture

In this section, you’ll learn how to create a collection, insert data into the collection, and perform search operations by providing a question as input.

- Declare the parameters. Make sure to replace EXTERNAL_IP_ADDRESS with the actual value.

      DATASET = 'squad'
      MODEL = 'bert-base-uncased'
      TOKENIZATION_BATCH_SIZE = 1000
      INFERENCE_BATCH_SIZE = 64
      INSERT_RATIO = .001
      COLLECTION_NAME = 'huggingface_db'
      DIMENSION = 768
      LIMIT = 10
      MILVUS_HOST = "EXTERNAL_IP_ADDRESS"
      MILVUS_PORT = "19530"

  Here’s what each parameter represents:

  - DATASET: Defines the HuggingFace dataset to use for searching answers.
  - MODEL: Defines the transformer to use for creating embeddings.
  - TOKENIZATION_BATCH_SIZE: Determines how many texts are processed at once during tokenization, and helps speed up tokenization by using parallelism.
  - INFERENCE_BATCH_SIZE: Sets the batch size for model inference when creating embeddings. You can reduce the batch size to 32 or 18 when using a smaller GPU size.
  - INSERT_RATIO: Controls the fraction of the text data that is converted into embeddings, which limits the amount of data indexed for vector search.
  - COLLECTION_NAME: Sets the name of the collection you are going to create.
  - DIMENSION: Sets the size of an individual embedding you are going to store in the collection.
  - LIMIT: Sets the number of results to search for and display in the output.
  - MILVUS_HOST: Sets the external IP used to access the deployed Milvus database.
  - MILVUS_PORT: Sets the port on which the deployed Milvus database is exposed.
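
To get a feel for how INSERT_RATIO and the two batch sizes interact, the sketch below estimates how many rows end up in the collection and how many batches each stage processes. The SQuAD row counts (87,599 training and 10,570 validation rows) and the round-up rule for the test split are assumptions, not values taken from this article, and rows_to_insert and batches are hypothetical helper names.

```python
import math

# Assumed SQuAD v1.1 split sizes (not taken from this article).
SQUAD_TRAIN_ROWS = 87_599
SQUAD_VALIDATION_ROWS = 10_570

INSERT_RATIO = 0.001
TOKENIZATION_BATCH_SIZE = 1000
INFERENCE_BATCH_SIZE = 64


def rows_to_insert(total_rows: int, ratio: float) -> int:
    """Estimate the test-split size, assuming the split rounds up."""
    return math.ceil(total_rows * ratio)


def batches(rows: int, batch_size: int) -> int:
    """Number of batches needed to cover `rows` items."""
    return math.ceil(rows / batch_size)


total_rows = SQUAD_TRAIN_ROWS + SQUAD_VALIDATION_ROWS
inserted = rows_to_insert(total_rows, INSERT_RATIO)

print(f"rows in split='all': {total_rows}")
print(f"rows embedded and inserted: {inserted}")
print(f"tokenization batches: {batches(inserted, TOKENIZATION_BATCH_SIZE)}")
print(f"inference batches: {batches(inserted, INFERENCE_BATCH_SIZE)}")
```

Under these assumptions only about 99 questions are embedded, which is consistent with the num_rows value reported after the insert step. Raising INSERT_RATIO grows the collection and the embedding time roughly in proportion.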

- Connect to the external Milvus database you deployed, using the external IP address and port on which Milvus is exposed. Make sure to replace the user and password field values with appropriate values. If you are accessing the database for the first time, then user = root and password = Milvus.

      # Pass the variables declared earlier, not the literal strings "MILVUS_HOST" and "MILVUS_PORT"
      connections.connect(host=MILVUS_HOST, port=MILVUS_PORT, user="USER", password="PASSWORD")
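
If you would rather not hard-code the address and credentials, one option is to read them from environment variables before connecting. The following is a minimal sketch under that assumption: milvus_settings and the MILVUS_USER and MILVUS_PASSWORD variable names are hypothetical, the defaults mirror the first-time credentials mentioned above, and the example address is a documentation-only IP.

```python
from typing import Dict, Mapping


def milvus_settings(env: Mapping[str, str]) -> Dict[str, str]:
    """Build keyword arguments for the connection call from environment values."""
    host = env.get("MILVUS_HOST", "")
    # Refuse to continue while the placeholder from the tutorial is still in place.
    if not host or host == "EXTERNAL_IP_ADDRESS":
        raise ValueError("Set MILVUS_HOST to the external IP of your Milvus deployment")
    return {
        "host": host,
        "port": env.get("MILVUS_PORT", "19530"),
        "user": env.get("MILVUS_USER", "root"),
        "password": env.get("MILVUS_PASSWORD", "Milvus"),
    }


# Example with an in-memory mapping; a real session could pass os.environ instead.
settings = milvus_settings({"MILVUS_HOST": "203.0.113.10"})
print(settings)
```

The resulting dictionary could then be unpacked into the connection call, for example connections.connect(**settings).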

Creating a collection

In this section, you’ll learn how to create a collection and define its schema to store the content from the dataset appropriately. You’ll also learn how to create indexes and load the collection.

- Check whether the collection exists. If the collection is present, it is deleted to avoid any conflicts.

      if utility.has_collection(COLLECTION_NAME):
          utility.drop_collection(COLLECTION_NAME)

- Create a collection named huggingface_db and define the collection schema.

      fields = [
          FieldSchema(name='id', dtype=DataType.INT64, is_primary=True, auto_id=True),
          FieldSchema(name='original_question', dtype=DataType.VARCHAR, max_length=1000),
          FieldSchema(name='answer', dtype=DataType.VARCHAR, max_length=1000),
          FieldSchema(name='original_question_embedding', dtype=DataType.FLOAT_VECTOR, dim=DIMENSION)
      ]
      schema = CollectionSchema(fields=fields)
      collection = Collection(name=COLLECTION_NAME, schema=schema)

  The following are the fields used to define the schema of the collection:

  - id: Primary field by which all the database entries are identified.
  - original_question: The field where the original question is stored, against which the question you ask is matched.
  - answer: The field holding the answer to every original_question.
  - original_question_embedding: Contains the embeddings for each entry in original_question, used to perform similarity search with the question you give as input.
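
The schema constraints can be checked before any row is sent to the database. The sketch below is a plain-Python illustration of such a check and does not call Milvus: validate_row and MAX_VARCHAR_LENGTH are hypothetical names, and the check counts characters, which is only an approximation if the database measures string length differently.

```python
from typing import List, Sequence

DIMENSION = 768            # embedding size declared in the schema
MAX_VARCHAR_LENGTH = 1000  # max_length used for both VARCHAR fields


def validate_row(question: str, answer: str, embedding: Sequence[float]) -> List[str]:
    """Return a list of problems; an empty list means the row fits the schema."""
    problems = []
    if len(question) > MAX_VARCHAR_LENGTH:
        problems.append("original_question exceeds max_length")
    if len(answer) > MAX_VARCHAR_LENGTH:
        problems.append("answer exceeds max_length")
    if len(embedding) != DIMENSION:
        problems.append(
            f"embedding has {len(embedding)} values, expected {DIMENSION}"
        )
    return problems


good = validate_row("When was the Tower constructed?", "1787", [0.0] * DIMENSION)
bad = validate_row("q" * 1200, "1787", [0.0] * 10)

print(good)
print(bad)
```

A check like this makes it easier to see which field caused a rejected insert, since the id field is generated automatically and never needs to be supplied.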

- Create an index for the original_question_embedding field to perform similarity search.

      index_params = {
          'metric_type': 'L2',
          'index_type': "IVF_FLAT",
          'params': {"nlist": 1536}
      }
      collection.create_index(field_name="original_question_embedding", index_params=index_params)

  Upon successful index creation for the specified field, the following output is displayed:

      Status(code=0, message=)

- Load the collection to ensure that it is ready to perform search operations.

      collection.load()
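
An IVF_FLAT index groups vectors into nlist clusters and, at query time, scans only the clusters closest to the query. The toy sketch below illustrates that idea in plain Python. It is not how Milvus implements the index: the centroids are fixed by hand rather than learned, the data is invented, and build_ivf and ivf_search are hypothetical names.

```python
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def squared_l2(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest_centroids(query: Vector, centroids: List[Vector], count: int) -> List[int]:
    """Indices of the `count` centroids closest to the query."""
    order = sorted(range(len(centroids)), key=lambda i: squared_l2(query, centroids[i]))
    return order[:count]


def build_ivf(vectors: List[Vector], centroids: List[Vector]) -> Dict[int, List[int]]:
    """Assign every vector id to the bucket of its closest centroid."""
    buckets: Dict[int, List[int]] = {i: [] for i in range(len(centroids))}
    for vector_id, vector in enumerate(vectors):
        buckets[nearest_centroids(vector, centroids, 1)[0]].append(vector_id)
    return buckets


def ivf_search(
    query: Vector,
    vectors: List[Vector],
    centroids: List[Vector],
    buckets: Dict[int, List[int]],
    nprobe: int,
    limit: int,
) -> List[Tuple[int, float]]:
    """Scan only the `nprobe` closest buckets and return the best `limit` hits."""
    candidates = [
        vector_id
        for bucket in nearest_centroids(query, centroids, nprobe)
        for vector_id in buckets[bucket]
    ]
    scored = [(vector_id, squared_l2(query, vectors[vector_id])) for vector_id in candidates]
    return sorted(scored, key=lambda hit: hit[1])[:limit]


# Two hand-picked centroids stand in for the clusters an index would learn.
centroids = [(0.0, 0.0), (10.0, 10.0)]
vectors = [(0.5, 0.2), (1.0, 1.0), (9.0, 9.5), (10.5, 10.0), (0.1, 0.9)]
buckets = build_ivf(vectors, centroids)

hits = ivf_search((0.0, 1.0), vectors, centroids, buckets, nprobe=1, limit=2)
print(buckets)
print(hits)
```

Scanning fewer buckets makes a search faster, but a true neighbour sitting in an unscanned bucket is missed, which is why this kind of search is called approximate.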

Inserting data in the collection

In this section, you’ll learn how to split the dataset into sets, tokenize all the questions in the dataset, create embeddings, and insert them into the collection.

- Load the dataset, split it into training and test sets, and process the test set so that each entry keeps only the first answer text, removing the original answers column.

      data_dataset = load_dataset(DATASET, split='all')
      data_dataset = data_dataset.train_test_split(test_size=INSERT_RATIO, seed=42)['test']
      data_dataset = data_dataset.map(lambda val: {'answer': val['answers']['text'][0]}, remove_columns=['answers'])

- Initialize the tokenizer.

      tokenizer = AutoTokenizer.from_pretrained(MODEL)

- Define the function to tokenize the questions.

      def tokenize_question(batch):
          results = tokenizer(batch['question'], add_special_tokens=True, truncation=True, padding="max_length", return_attention_mask=True, return_tensors="pt")
          batch['input_ids'] = results['input_ids']
          batch['token_type_ids'] = results['token_type_ids']
          batch['attention_mask'] = results['attention_mask']
          return batch

- Tokenize each question entry using the tokenize_question function defined earlier, and set the output to a torch-compatible format for PyTorch-based Machine Learning models.

      data_dataset = data_dataset.map(tokenize_question, batch_size=TOKENIZATION_BATCH_SIZE, batched=True)
      data_dataset.set_format('torch', columns=['input_ids', 'token_type_ids', 'attention_mask'], output_all_columns=True)

- Load the pre-trained model, pass it the tokenized questions, generate the embeddings from the questions, and insert them into the dataset as question_embedding.

      model = AutoModel.from_pretrained(MODEL)

      def embed(batch):
          sentence_embs = model(
              input_ids=batch['input_ids'],
              token_type_ids=batch['token_type_ids'],
              attention_mask=batch['attention_mask']
          )[0]
          # Mean-pool the token embeddings, ignoring padding positions
          input_mask_expanded = batch['attention_mask'].unsqueeze(-1).expand(sentence_embs.size()).float()
          batch['question_embedding'] = sum(sentence_embs * input_mask_expanded, 1) / clamp(input_mask_expanded.sum(1), min=1e-9)
          return batch

      data_dataset = data_dataset.map(embed, remove_columns=['input_ids', 'token_type_ids', 'attention_mask'], batched=True, batch_size=INFERENCE_BATCH_SIZE)

- Insert questions into the collection.

      def insert_function(batch):
          insertable = [
              batch['question'],
              # Shorten long answers so they fit the VARCHAR max_length of 1000
              [x[:995] + '...' if len(x) > 999 else x for x in batch['answer']],
              batch['question_embedding'].tolist()
          ]
          collection.insert(insertable)

      data_dataset.map(insert_function, batched=True, batch_size=64)
      collection.flush()

  The output will look like this:

      Dataset({
          features: ['id', 'title', 'context', 'question', 'answer', 'input_ids', 'token_type_ids', 'attention_mask', 'question_embedding'],
          num_rows: 99
      })
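
The embed function in this section averages the token vectors of each question while ignoring padding positions. The sketch below shows that masked mean pooling on a tiny hand-made example in plain Python. It is an illustration only: masked_mean is a hypothetical helper, the numbers are invented, and the tutorial code performs the same arithmetic on whole batches of PyTorch tensors.

```python
from typing import List


def masked_mean(token_vectors: List[List[float]], attention_mask: List[int]) -> List[float]:
    """Average the token vectors whose mask is 1, ignoring padded positions."""
    dimension = len(token_vectors[0])
    totals = [0.0] * dimension
    for vector, keep in zip(token_vectors, attention_mask):
        for i in range(dimension):
            totals[i] += vector[i] * keep
    # Mirror clamp(..., min=1e-9): avoid dividing by zero for an all-padding row.
    real_tokens = max(sum(attention_mask), 1e-9)
    return [total / real_tokens for total in totals]


# Three real tokens followed by two padding tokens, each with 2 dimensions.
token_vectors = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [9.0, 9.0], [9.0, 9.0]]
attention_mask = [1, 1, 1, 0, 0]

pooled = masked_mean(token_vectors, attention_mask)
print(pooled)
```

Because the tokenizer pads every question to the same length, an unmasked average would be pulled towards the padding vectors, so short questions would look more alike than they really are.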

Generating responses

In this section, you’ll learn how to provide a prompt, tokenize and embed the prompt to perform similarity search, and generate the most relevant responses.

- Create a prompt dataset. You can replace the question with any custom prompt, and you can also change the number of questions in the list.

      questions = {'question': ['When was maths invented?']}
      question_dataset = Dataset.from_dict(questions)

- Tokenize and embed the prompt.

      question_dataset = question_dataset.map(tokenize_question, batched=True, batch_size=TOKENIZATION_BATCH_SIZE)
      question_dataset.set_format('torch', columns=['input_ids', 'token_type_ids', 'attention_mask'], output_all_columns=True)
      question_dataset = question_dataset.map(embed, remove_columns=['input_ids', 'token_type_ids', 'attention_mask'], batched=True, batch_size=INFERENCE_BATCH_SIZE)

- Define the search function that performs search operations using the embeddings created earlier. The retrieved information is organized into lists and returned as a dictionary.

      def search(batch):
          res = collection.search(batch['question_embedding'].tolist(), anns_field='original_question_embedding', param={}, output_fields=['answer', 'original_question'], limit=LIMIT)
          overall_id = []
          overall_distance = []
          overall_answer = []
          overall_original_question = []
          # One list of hits is returned per query embedding
          for hits in res:
              ids = []
              distance = []
              answer = []
              original_question = []
              for hit in hits:
                  ids.append(hit.id)
                  distance.append(hit.distance)
                  answer.append(hit.entity.get('answer'))
                  original_question.append(hit.entity.get('original_question'))
              overall_id.append(ids)
              overall_distance.append(distance)
              overall_answer.append(answer)
              overall_original_question.append(original_question)
          return {
              'id': overall_id,
              'distance': overall_distance,
              'answer': overall_answer,
              'original_question': overall_original_question
          }

- Perform the search operation by applying the search function defined earlier to the question_dataset.

      question_dataset = question_dataset.map(search, batched=True, batch_size=1)
      for x in question_dataset:
          print()
          print('Question:')
          print(x['question'])
          print('Answer, Distance, Original Question')
          for row in zip(x['answer'], x['distance'], x['original_question']):
              print(row)

  The output will look like this:

      Question:
      When was maths invented?
      Answer, Distance, Original Question
      ('until 1870', tensor(33.3018), 'When did the Papal States exist?')
      ('October 1992', tensor(34.8276), 'When were free elections held?')
      ('1787', tensor(36.0596), 'When was the Tower constructed?')
      ('Poland, Bulgaria, the Czech Republic, Slovakia, Hungary, Albania, former East Germany and Cuba', tensor(38.3254), 'Where was Russian schooling mandatory in the 20th century?')
      ('6,000 years', tensor(41.9444), 'How old did biblical scholars think the Earth was?')
      ('1992', tensor(42.2079), 'In what year was the Premier League created?')
      ('1981', tensor(44.7781), "When was ZE's Mutant Disco launched?")
      ('Medieval Latin', tensor(46.9699), "What was the Latin of Charlemagne's period later referred to as?")
      ('taxation', tensor(49.2372), 'How did Hobson argue to rid the world of imperialism?')
      ('light weight, relative unbreakability and low surface noise', tensor(49.5037), "What were advantages of vinyl in the 1930's?")

  In the above output, the 10 closest answers for the question you asked are printed in order of increasing distance, along with the original questions these answers belong to. The tensor value shown with each answer is the distance between your question and the stored question: a smaller value means the stored question is a closer match to the question you asked.
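
To make the ordering of the results concrete, the sketch below ranks a few stored items by their L2 distance to a query using an exhaustive scan. It is an illustration under stated assumptions: the 3-dimensional vectors are invented, top_k and l2_distance are hypothetical helpers, and the deployed database relies on its index rather than comparing the query against every stored vector.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple


def l2_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean (L2) distance between two equally sized vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def top_k(
    query: Sequence[float],
    stored: Dict[str, Sequence[float]],
    limit: int,
) -> List[Tuple[str, float]]:
    """Return the `limit` stored items closest to the query, nearest first."""
    scored = ((name, l2_distance(query, vector)) for name, vector in stored.items())
    return heapq.nsmallest(limit, scored, key=lambda hit: hit[1])


# Made-up 3-dimensional "embeddings" keyed by the question they represent.
stored = {
    "When was the Tower constructed?": (1.0, 0.0, 0.0),
    "In what year was the Premier League created?": (0.0, 3.0, 4.0),
    "When did the Papal States exist?": (1.0, 1.0, 0.0),
}

results = top_k((1.0, 1.0, 1.0), stored, limit=2)
for name, distance in results:
    print(f"{distance:.4f}  {name}")
```

The nearest item comes first, just as in the search output above. A small distance only says that two questions have similar embeddings, so the attached answer can still be unrelated to what you asked.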

Conclusion

In this article, you learned how to build a question-answering system using a HuggingFace dataset and a Milvus database. The tutorial guided you through the steps to create embeddings from a dataset, store them in a collection, and then perform similarity search to find the best matching answers for a prompt by embedding the question provided and calculating its distance to the stored questions.

This is a sponsored article by Vultr. Vultr is the world’s largest privately-held cloud computing platform. A favorite with developers, Vultr has served over 1.5 million customers across 185 countries with flexible, scalable, global Cloud Compute, Cloud GPU, Bare Metal, and Cloud Storage solutions. Learn more about Vultr.