Editor’s be aware: This put up is a part of the AI Decoded collection, which demystifies AI by making the know-how extra accessible and showcases new {hardware}, software program, instruments and accelerations for NVIDIA RTX PC and workstation customers.

The demand for instruments to simplify and optimize generative AI improvement is skyrocketing. Purposes based mostly on retrieval-augmented technology (RAG) — a method for enhancing the accuracy and reliability of generative AI fashions with information fetched from specified exterior sources — and customised fashions are enabling builders to tune AI fashions to their particular wants.

Whereas such work might have required a fancy setup prior to now, new instruments are making it simpler than ever.

NVIDIA AI Workbench simplifies AI developer workflows by serving to customers construct their very own RAG initiatives, customise fashions and extra. It’s a part of the RTX AI Toolkit — a set of instruments and software program improvement kits for customizing, optimizing and deploying AI capabilities — launched at COMPUTEX earlier this month. AI Workbench removes the complexity of technical duties that may derail specialists and halt freshmen.

What Is NVIDIA AI Workbench?

Obtainable without spending a dime, NVIDIA AI Workbench permits customers to develop, experiment with, check and prototype AI functions throughout GPU methods of their selection — from laptops and workstations to information heart and cloud. It presents a brand new strategy for creating, utilizing and sharing GPU-enabled improvement environments throughout folks and methods.

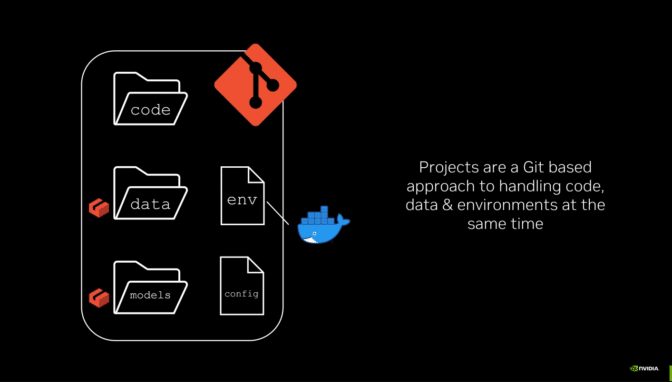

A easy set up will get customers up and operating with AI Workbench on an area or distant machine in simply minutes. Customers can then begin a brand new undertaking or replicate one from the examples on GitHub. Every part works via GitHub or GitLab, so customers can simply collaborate and distribute work. Be taught extra about getting began with AI Workbench.

How AI Workbench Helps Handle AI Mission Challenges

Growing AI workloads can require handbook, typically advanced processes, proper from the beginning.

Establishing GPUs, updating drivers and managing versioning incompatibilities might be cumbersome. Reproducing initiatives throughout totally different methods can require replicating handbook processes again and again. Inconsistencies when replicating initiatives, like points with information fragmentation and model management, can hinder collaboration. Various setup processes, transferring credentials and secrets and techniques, and adjustments within the atmosphere, information, fashions and file places can all restrict the portability of initiatives.

AI Workbench makes it simpler for information scientists and builders to handle their work and collaborate throughout heterogeneous platforms. It integrates and automates varied facets of the event course of, providing:

- Ease of setup: AI Workbench streamlines the method of organising a developer atmosphere that’s GPU-accelerated, even for customers with restricted technical data.

- Seamless collaboration: AI Workbench integrates with version-control and project-management instruments like GitHub and GitLab, decreasing friction when collaborating.

- Consistency when scaling from native to cloud: AI Workbench ensures consistency throughout a number of environments, supporting scaling up or down from native workstations or PCs to information facilities or the cloud.

RAG for Paperwork, Simpler Than Ever

NVIDIA presents pattern improvement Workbench Initiatives to assist customers get began with AI Workbench. The hybrid RAG Workbench Mission is one instance: It runs a customized, text-based RAG net utility with a consumer’s paperwork on their native workstation, PC or distant system.

Each Workbench Mission runs in a “container” — software program that features all the mandatory elements to run the AI utility. The hybrid RAG pattern pairs a Gradio chat interface frontend on the host machine with a containerized RAG server — the backend that companies a consumer’s request and routes queries to and from the vector database and the chosen massive language mannequin.

This Workbench Mission helps all kinds of LLMs out there on NVIDIA’s GitHub web page. Plus, the hybrid nature of the undertaking lets customers choose the place to run inference.

Builders can run the embedding mannequin on the host machine and run inference domestically on a Hugging Face Textual content Era Inference server, on the right track cloud sources utilizing NVIDIA inference endpoints just like the NVIDIA API catalog, or with self-hosting microservices similar to NVIDIA NIM or third-party companies.

The hybrid RAG Workbench Mission additionally consists of:

- Efficiency metrics: Customers can consider how RAG- and non-RAG-based consumer queries carry out throughout every inference mode. Tracked metrics embrace Retrieval Time, Time to First Token (TTFT) and Token Velocity.

- Retrieval transparency: A panel reveals the precise snippets of textual content — retrieved from essentially the most contextually related content material within the vector database — which are being fed into the LLM and bettering the response’s relevance to a consumer’s question.

- Response customization: Responses might be tweaked with quite a lot of parameters, similar to most tokens to generate, temperature and frequency penalty.

To get began with this undertaking, merely set up AI Workbench on an area system. The hybrid RAG Workbench Mission might be introduced from GitHub into the consumer’s account and duplicated to the native system.

Extra sources can be found within the AI Decoded consumer information. As well as, neighborhood members present useful video tutorials, just like the one from Joe Freeman beneath.

Customise, Optimize, Deploy

Builders typically search to customise AI fashions for particular use circumstances. Superb-tuning, a method that adjustments the mannequin by coaching it with extra information, might be helpful for model switch or altering mannequin habits. AI Workbench helps with fine-tuning, as nicely.

The Llama-factory AI Workbench Mission permits QLoRa, a fine-tuning methodology that minimizes reminiscence necessities, for quite a lot of fashions, in addition to mannequin quantization by way of a easy graphical consumer interface. Builders can use public or their very own datasets to satisfy the wants of their functions.

As soon as fine-tuning is full, the mannequin might be quantized for improved efficiency and a smaller reminiscence footprint, then deployed to native Home windows functions for native inference or to NVIDIA NIM for cloud inference. Discover a full tutorial for this undertaking on the NVIDIA RTX AI Toolkit repository.

Actually Hybrid — Run AI Workloads Anyplace

The Hybrid-RAG Workbench Mission described above is hybrid in a couple of method. Along with providing a selection of inference mode, the undertaking might be run domestically on NVIDIA RTX workstations and GeForce RTX PCs, or scaled as much as distant cloud servers and information facilities.

The power to run initiatives on methods of the consumer’s selection — with out the overhead of organising the infrastructure — extends to all Workbench Initiatives. Discover extra examples and directions for fine-tuning and customization within the AI Workbench quick-start information.

Generative AI is remodeling gaming, videoconferencing and interactive experiences of all types. Make sense of what’s new and what’s subsequent by subscribing to the AI Decoded e-newsletter.