Introduction

With the release of GPT-4o, this model is getting huge attention for its multimodal capabilities. GPT-4o is known for its advanced language processing skills and has been enhanced to interpret and generate visual content. However, we shouldn’t overlook Gemini, a model that was highly praised for its multimodal abilities long before GPT-4o arrived. Gemini excels at combining image recognition with strong language understanding, making it a worthy competitor to GPT-4o. In this article, we’ll compare GPT-4o vs Gemini by analyzing how well they perform on various tasks. By looking at their performance, we aim to determine which model is better. This comparison matters because the ability to work with both text and images is valuable for many applications, such as automated content creation and data analysis.

How Do GPT-4o and Gemini Stack Up Against Each Other?

Let’s explore how GPT-4o and Gemini stack up against each other and see which one comes out on top.

Calculate Sum of Numbers

A very basic task for a multimodal LLM is to correctly identify the text and numbers written in a given image. So here we’ve provided an image containing some text and asked our two contenders, GPT-4o and Gemini, to calculate the sum of the numbers in the image. Let’s see who wins this round.
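To check a model’s answer on a task like this, you can transcribe the text from the image yourself and let a short script do the arithmetic. The sketch below assumes the text has already been extracted into a plain string; the sample string is made up for illustration (it is not the image used in this test), and `sum_numbers_in_text` is a hypothetical helper name.

```python
import re

# Matches integers and decimals, with an optional leading minus sign.
# Note: a hyphen directly before a digit is read as a minus sign.
NUMBER_PATTERN = re.compile(r"-?\d+(?:\.\d+)?")


def sum_numbers_in_text(text: str) -> float:
    """Hypothetical helper: return the sum of every number found in `text`."""
    return sum(float(match) for match in NUMBER_PATTERN.findall(text))


if __name__ == "__main__":
    # Made-up sample standing in for a transcription of the test image.
    sample = "Apples: 12, Oranges: 7.5, Pears: 3"
    print(sum_numbers_in_text(sample))  # prints 22.5
```

Comparing this value with each model’s reply makes it easy to see whether a model misread a digit or simply misunderstood the prompt.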

GPT-4o

Here you can see that GPT-4o provided the correct output. Easy peasy.

Gemini

It’s unclear what Gemini understood from the prompt. Although the request was quite simple, Gemini couldn’t grasp the context.

Result

GPT-4o won!

Code the Game Shown in the Attached Image in Python

In this round, we’ve provided an image of a tic-tac-toe game without naming the game in the prompt. The task for our models is to first identify the game and then write Python code to implement it.

GPT-4o

```python
def print_board(board):
    # Print each row with vertical separators, followed by a dashed line
    for row in board:
        print(" | ".join(row))
        print("-" * 9)


def check_winner(board):
    # Check rows
    for row in board:
        if row[0] == row[1] == row[2] != " ":
            return row[0]
    # Check columns
    for col in range(3):
        if board[0][col] == board[1][col] == board[2][col] != " ":
            return board[0][col]
    # Check diagonals
    if board[0][0] == board[1][1] == board[2][2] != " ":
        return board[0][0]
    if board[0][2] == board[1][1] == board[2][0] != " ":
        return board[0][2]
    return None


def is_board_full(board):
    for row in board:
        if " " in row:
            return False
    return True


def main():
    # Starting position as read from the image
    board = [
        [" ", " ", "O"],
        ["O", "X", " "],
        [" ", " ", " "]
    ]
    current_player = "X"  # X's turn to play
    print("Initial Board:")
    print_board(board)

    while True:
        row = int(input(f"Player {current_player}, enter the row (0, 1, 2): "))
        col = int(input(f"Player {current_player}, enter the column (0, 1, 2): "))
        if board[row][col] == " ":
            board[row][col] = current_player
        else:
            print("Cell already taken! Try again.")
            continue

        print_board(board)
        winner = check_winner(board)
        if winner:
            print(f"Player {winner} wins!")
            break
        if is_board_full(board):
            print("It's a draw!")
            break
        # Switch to the other player
        current_player = "X" if current_player == "O" else "O"


if __name__ == "__main__":
    main()
```

Output

The code provided by GPT-4o gave the output above. Great job identifying the game correctly and drawing a grid as well. One of the “O”s is misplaced in the output, but otherwise we’ve got a fully functional tic-tac-toe game.
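GPT-4o’s program converts each answer with `int(input(...))` and indexes the board directly. As a result, a typo such as “a” raises `ValueError`, a number like 5 raises `IndexError`, and -1 silently wraps around to the last row. Below is a minimal sketch of a stricter parser, assuming the 0-based coordinates GPT-4o’s version uses. `parse_move` is a hypothetical helper, not part of either model’s output.

```python
from typing import Optional, Tuple


def parse_move(raw_row: str, raw_col: str, size: int = 3) -> Optional[Tuple[int, int]]:
    """Hypothetical helper: turn raw input strings into a 0-based (row, col) pair.

    Returns None instead of raising when the input is not an integer
    or falls outside 0..size-1, so the caller can simply re-prompt.
    """
    try:
        row, col = int(raw_row), int(raw_col)
    except ValueError:
        return None  # not an integer, e.g. "a" or "1.5"
    if not (0 <= row < size and 0 <= col < size):
        return None  # also rejects negatives, which would otherwise wrap around
    return row, col


if __name__ == "__main__":
    print(parse_move("1", "2"))   # prints (1, 2)
    print(parse_move("a", "0"))   # prints None
    print(parse_move("-1", "0"))  # prints None
```

In the game loop, the two `int(input(...))` calls could read raw strings instead, pass them to `parse_move`, and `continue` whenever it returns `None`.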

Gemini

```python
def display_board(board):
    """Displays the current board state"""
    for row in board:
        for cell in row:
            print(cell, end=" ")
        print()


def is_valid_move(board, row, col):
    """Checks if a move is valid (i.e. the cell is empty)"""
    return board[row][col] == " "


def make_move(board, player, row, col):
    """Places a player's mark on the board"""
    board[row][col] = player


def is_winner(board, player):
    """Checks if a player has won"""
    # Check rows
    for row in board:
        if all(cell == player for cell in row):
            return True
    # Check columns
    for col in range(len(board[0])):
        if all(board[row][col] == player for row in range(len(board))):
            return True
    # Check diagonals
    if all(board[row][row] == player for row in range(len(board))):
        return True
    if all(board[row][len(board) - row - 1] == player for row in range(len(board))):
        return True
    return False


def is_board_full(board):
    """Checks if the board is full"""
    for row in board:
        for cell in row:
            if cell == " ":
                return False
    return True


def main():
    """Main game loop"""
    board = [[" " for _ in range(3)] for _ in range(3)]
    current_player = "X"

    while True:
        display_board(board)

        # Get player move (1-based input, converted to 0-based indices)
        while True:
            row = int(input("Player {} enter row (1-3): ".format(current_player))) - 1
            col = int(input("Player {} enter column (1-3): ".format(current_player))) - 1
            if is_valid_move(board, row, col):
                break
            else:
                print("Invalid move. Please try again.")

        # Make move and check for winner
        make_move(board, current_player, row, col)
        if is_winner(board, current_player):
            display_board(board)
            print("Player {} wins!".format(current_player))
            break

        # Check for tie
        if is_board_full(board):
            display_board(board)
            print("It's a tie!")
            break

        # Switch player
        current_player = "O" if current_player == "X" else "X"


if __name__ == "__main__":
    main()
```

Output:

When we ran the code provided by Gemini, it had clearly identified the game, but as you can see, we didn’t get any grid. This made the game difficult to play. Below you can see how the game looks without the grid.
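The missing grid comes from Gemini’s `display_board`: it prints each cell followed by a space, and because empty cells are themselves spaces, a new or sparse board prints as little more than blank lines. Here is a minimal sketch of a drop-in replacement that draws separators, in the spirit of GPT-4o’s `print_board`. The name `format_board` is hypothetical; it returns a string so the layout can be checked without printing.

```python
def format_board(board):
    """Hypothetical helper: render a square board with grid lines as a string."""
    # Each row becomes e.g. " X |   | O " and rows are joined by a dashed line
    rows = [" " + " | ".join(row) + " " for row in board]
    separator = "\n" + "-" * len(rows[0]) + "\n"
    return separator.join(rows)


def display_board(board):
    """Drop-in replacement for Gemini's display_board that shows the grid."""
    print(format_board(board))


if __name__ == "__main__":
    display_board([["X", " ", "O"],
                   [" ", "X", " "],
                   ["O", " ", " "]])
```

Because the function keeps Gemini’s original name and signature, the rest of Gemini’s program would not need any other change.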

Result

GPT-4o won!

Generate Python Code to Recreate a Bar Chart Using Matplotlib

In this round, we’ve given an image of a bar chart to both models. They need to analyze the chart and generate the Python code required to recreate it using Matplotlib, making sure the code produces the same bar chart when run. Let’s see which model wins this round.

GPT-4o

```python
import matplotlib.pyplot as plt
import numpy as np

# Data
quarters = ['Q1', 'Q2', 'Q3', 'Q4']
sales_A = [10, 15, 17, 12]
sales_B = [10, 15, 20, 15]

# Plotting: product B is stacked on top of product A
fig, ax = plt.subplots()
bar_width = 0.35
index = np.arange(len(quarters))
bar1 = ax.bar(index, sales_A, bar_width, label="A")
bar2 = ax.bar(index, sales_B, bar_width, bottom=sales_A, label="B")

ax.set_xlabel('Quarter')
ax.set_ylabel('Sales')
ax.set_title('Sales by Product & Quarter')
ax.set_xticks(index)
ax.set_xticklabels(quarters)
ax.legend()

plt.show()
```

Output

Gemini

```python
import matplotlib.pyplot as plt

# Data
products = ["A", "B"]
sales_q1 = [30, 25]
sales_q2 = [40, 35]
sales_q3 = [20, 30]
sales_q4 = [10, 15]

# Create a bar chart
fig, ax = plt.subplots()
width = 0.35  # the width of the bars
index = range(len(products))  # the x-axis positions for the bars
bar1 = plt.bar(index, sales_q1, width, label="Q1")
bar2 = plt.bar([p + width for p in index], sales_q2, width, label="Q2")
bar3 = plt.bar([p + width * 2 for p in index], sales_q3, width, label="Q3")
bar4 = plt.bar([p + width * 3 for p in index], sales_q4, width, label="Q4")

# Add labels and title
plt.xlabel('Products')
plt.ylabel('Sales')
plt.title('Sales by Product & Quarter')

# X-axis labels
plt.xticks([p + 1.5 * width for p in index], products)

# Legend
plt.legend()

plt.show()
```

Output

Result

GPT-4o won!

Explain Code and Show the Output

Here we’ve given an image to both models. They have to understand the code written in the provided screenshot and also show its output. Let’s see how they perform in this test.

GPT-4o

GPT-4o provided a very long explanation, but here’s the summary and output:

Gemini

Gemini gave the explanation below but no output for the code.

The point goes to GPT-4o for properly understanding the prompt and also providing the correct output.

Result

GPT-4o won!

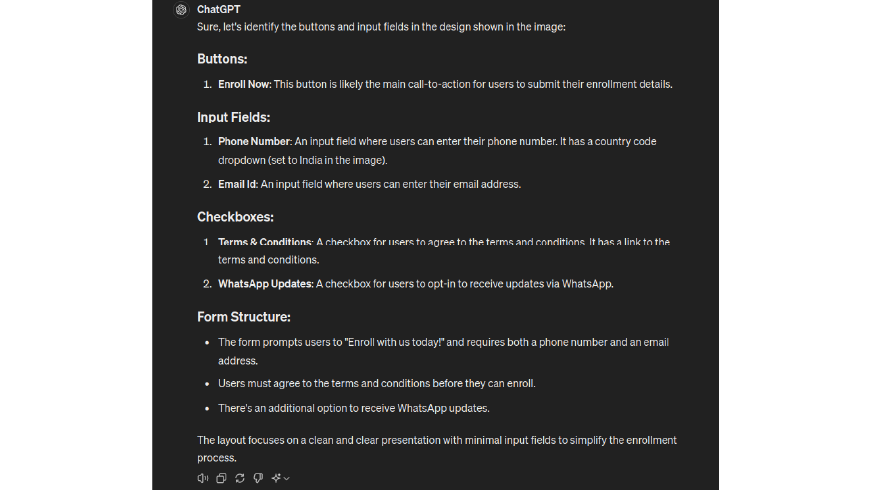

Identify Buttons and Input Fields in the Given Design

In this prompt, the models were asked for a detailed analysis of a user interface (UI) design to locate and describe interactive elements such as buttons and input fields. The goal is to specify what each element is, its purpose, and any associated labels or features.

GPT-4o

It’s impressive how accurately GPT-4o identifies the elements in the design, with a clear understanding of each button, checkbox and text box.

Gemini

Gemini got the input fields right, but it was uncertain about the submit button, which was square in shape.

Result

GPT-4o won!

GPT-4o vs Gemini: Final Verdict

| Task | Winner |
| --- | --- |
| Calculating the sum of numbers from an image | GPT-4o |
| Writing Python code for a tic-tac-toe game based on an image | GPT-4o |
| Creating Python code to recreate a bar chart from an image | GPT-4o |
| Explaining code from a screenshot and providing its output | GPT-4o |
| Identifying buttons and input fields in a user interface design | GPT-4o |

In this head-to-head comparison, GPT-4o clearly outperformed Gemini. It won all five rounds, from calculating sums and coding games to generating bar charts and analyzing UI designs, and showed a strong ability to understand and process both text and images.

Conclusion

Gemini, on the other hand, struggled in several areas. While it performed adequately in places, such as identifying the input fields in the UI design, it misread the prompt in the sum task, produced a tic-tac-toe game without a visible grid, and explained the screenshot’s code without showing its output. Its performance was inconsistent, highlighting its limitations compared to GPT-4o.

Overall, GPT-4o proved to be the more reliable and versatile model in these tests. Its stronger performance across multiple tasks makes it the clear winner of this comparison. If you need a model that can handle both text and images with high accuracy, GPT-4o is the better choice.