Introduction

One field that has seen rapid growth in recent times is Artificial Intelligence. By leveraging Artificial Intelligence, machines can accomplish a wide range of tasks, from applying snap filters to your photos to driving cars autonomously. Two other terms that are frequently used interchangeably with Artificial Intelligence are Machine Learning and Deep Learning. What are Machine Learning and Deep Learning? Do they mean the same thing? Are they completely different from each other? Or are they related?

This comprehensive, fun read will answer these questions as we explore Deep Learning vs Machine Learning.

What’s Machine Learning?

Before we tackle the age-old question of Machine Learning vs. Deep Learning, let’s briefly understand their definitions.

Machine Learning, or ML, is a subfield of Artificial Intelligence that revolves around developing computer algorithms that learn from data. These algorithms enable machines to make decisions or predictions by analyzing data and drawing inferences from it.

Much like how humans gain knowledge by understanding inputs, Machine Learning aims to make decisions from input data. The powerhouse behind Machine Learning is its algorithms.

Different Machine Learning algorithms can be used depending on the structure and volume of the data.

What’s Deep Learning?

Deep Learning is a subfield of Machine Learning that leverages neural networks to loosely replicate the workings of a human brain on machines. The neurons in a neural network are configured by training on large amounts of data. Much like algorithms are the powerhouses behind Machine Learning, Deep Learning has models. These models take information from multiple data sources and can analyze that data in real time.

A Deep Learning model consists of nodes that form layers in a neural network. Information is passed through each layer, which tries to make sense of it and identify patterns.

A Deep Learning model can create simpler and more efficient categories from difficult-to-understand datasets since it can identify both higher-level and lower-level information.
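To make the idea of information passing through layers of nodes concrete, here is a minimal sketch in plain Python. The weights and biases are hypothetical, hand-picked values used only for illustration; a real Deep Learning model would learn them from data, and would normally be built with a dedicated library rather than by hand.

```python
import math


def relu(values):
    """Keep positive activations and zero out the rest."""
    return [max(0.0, v) for v in values]


def sigmoid(value):
    """Squash a number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-value))


def dense(inputs, weights, biases):
    """One layer of nodes: each node computes a weighted sum of all inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(node_weights, inputs)) + bias
        for node_weights, bias in zip(weights, biases)
    ]


# Hypothetical, hand-picked parameters; a trained model would learn these from data.
HIDDEN_WEIGHTS = [[1.0, -1.0], [-1.0, 1.0]]
HIDDEN_BIASES = [0.0, 0.0]
OUTPUT_WEIGHTS = [[1.0, 1.0]]
OUTPUT_BIASES = [-0.5]


def forward(inputs):
    """Pass the inputs through a hidden layer and then an output layer."""
    hidden = relu(dense(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES))
    output = dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)
    return sigmoid(output[0])
```

With these particular weights, `forward([0, 1])` and `forward([1, 0])` score above 0.5, while `forward([0, 0])` and `forward([1, 1])` score below it. A single linear layer cannot represent this pattern, which hints at why stacking layers helps.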

Before they go head to head, let us look at the most common Machine Learning algorithms in use.

Most Common Machine Learning Algorithms

Algorithms serve as the foundation of Machine Learning, which Data Scientists and Big Data Engineers can leverage to classify, predict, and gain insights from data.

In this section, we’ll discuss some of the most commonly used Machine Learning algorithms.

Linear Regression

It’s an algorithm used in data science and machine learning that models a linear relationship between a dependent variable and an independent variable to predict the outcome of future events.

While the dependent variable changes with fluctuations in the independent variable, the independent variable remains unchanged when other variables change. The model predicts the value of the dependent variable being analyzed.

Linear Regression models a mathematical relationship between variables and makes predictions for numeric or continuous variables, for instance, price, sales, or salary.

Why use a Linear Regression Algorithm?

- It can handle large datasets effectively.

- It serves as a good baseline model when comparing more complex ML algorithms.

- It’s easy to understand and implement.

With its ease of use and efficiency, Linear Regression is a fundamental machine learning algorithm that one must have in their arsenal.
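As a rough illustration of how a linear relationship is fitted, the sketch below implements ordinary least squares for a single independent variable in plain Python. The experience and salary figures are made up for the example, and the function names are assumptions rather than part of any library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one independent variable: y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both without the 1/n factor).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x, slope, intercept):
    """Predict the dependent variable for a new value of the independent variable."""
    return slope * x + intercept


# Made-up data: years of experience vs. salary in thousands.
YEARS = [1, 2, 3, 4, 5]
SALARY = [35, 40, 45, 50, 55]

slope, intercept = fit_line(YEARS, SALARY)
predicted_salary = predict(6, slope, intercept)
```

For this perfectly linear toy data, the fitted line has a slope of 5 and an intercept of 30, so six years of experience maps to a predicted salary of 60.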

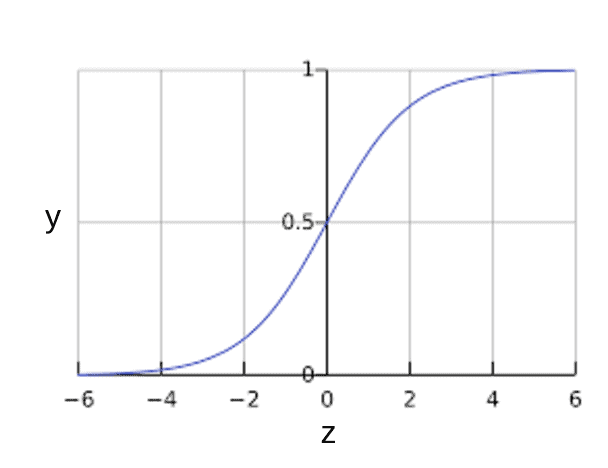

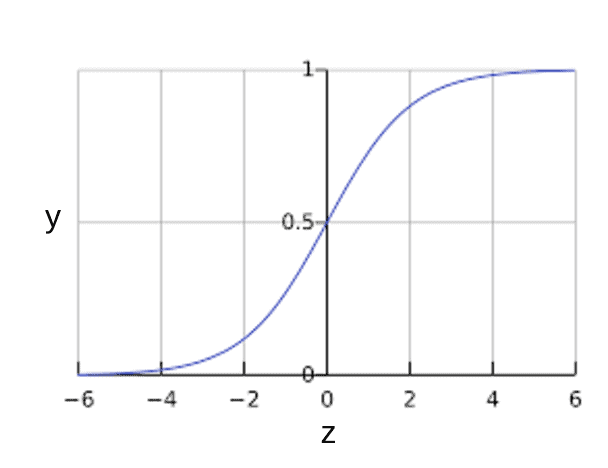

Logistic Regression

What if a dataset has many features? How can we classify its records? This is where Logistic Regression comes into play. Logistic Regression is a form of supervised learning that predicts the probability of certain classes based on some independent variables. Simply put, it analyzes the relationship between variables. Although the final output is a discrete class, the model first produces a probabilistic value that always lies between 0 and 1. Unlike linear regression, which is used to solve regression problems, logistic regression solves classification problems.

Why use a Logistic Regression Algorithm?

- It’s easier to implement, interpret, and train than many other algorithms.

- It gives useful insights by measuring how relevant each variable is.

- It’s effective with linearly separable datasets.

Logistic Regression is yet another commonly used Machine Learning algorithm that leverages probability for its outcomes and is a must-know algorithm for Data Scientists and Data Analysts.
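The sketch below shows one way the probability between 0 and 1 can be produced: a sigmoid applied to a weighted input, with the weight and bias fitted by plain gradient descent. The data (hours studied vs. pass or fail), the learning rate, and the number of epochs are all assumptions chosen for illustration.

```python
import math


def sigmoid(z):
    """Map any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(xs, labels, learning_rate=0.1, epochs=2000):
    """Fit a weight and bias by gradient descent on the log loss."""
    n = len(xs)
    mean_x = sum(xs) / n
    centered = [x - mean_x for x in xs]  # centering helps gradient descent converge
    weight, bias = 0.0, 0.0
    for _ in range(epochs):
        errors = [sigmoid(weight * x + bias) - y for x, y in zip(centered, labels)]
        weight -= learning_rate * sum(e * x for e, x in zip(errors, centered)) / n
        bias -= learning_rate * sum(errors) / n
    return weight, bias, mean_x


def predict_probability(x, weight, bias, mean_x):
    """Probability that the input belongs to class 1."""
    return sigmoid(weight * (x - mean_x) + bias)


# Made-up data: hours studied and whether the student passed (1) or failed (0).
HOURS = [1, 2, 3, 4, 5, 6]
PASSED = [0, 0, 0, 1, 1, 1]

weight, bias, mean_x = train_logistic_regression(HOURS, PASSED)
```

After training, `predict_probability(3, weight, bias, mean_x)` falls below 0.5 and `predict_probability(4, weight, bias, mean_x)` rises above it, so thresholding the probability at 0.5 turns the model into a classifier.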

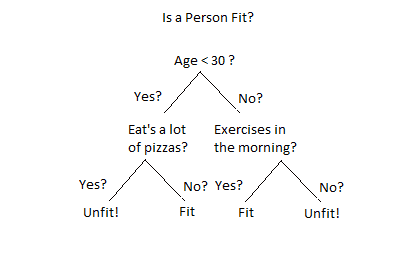

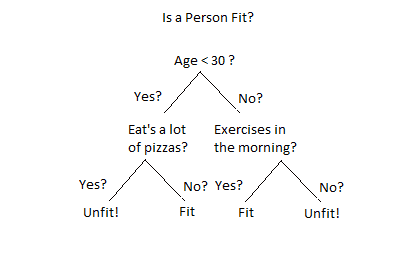

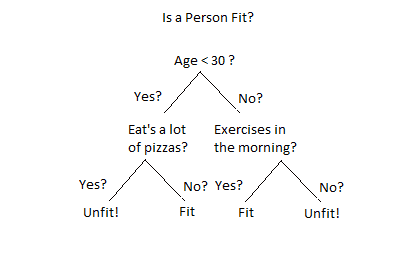

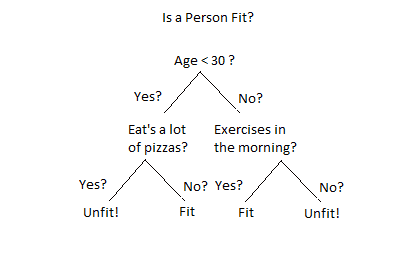

Decision Tree

This is another form of supervised learning in which the data is split based on different conditions. Starting at the root node, the value of the root attribute is compared with the corresponding attribute of the record, and the matching branch is followed to the next node. At every subsequent node, the attribute value is compared again, moving down through the sub-nodes until a leaf node of the tree is reached.

Unlike the previous two, a Decision Tree is used for both classification and regression tasks.

A Decision Tree consists of:

- Decision nodes: this is where the data split happens

- Leaves: this is where the final outcomes are produced

Why use a Decision Tree Algorithm?

- It can handle multi-output problems.

- It can handle both categorical and numerical data.

- It needs little data pre-processing, making it less cumbersome to use.

- It doesn’t need data scaling.

- It is relatively robust to missing values in the data, although how they are handled depends on the implementation.

Decision Tree is another important machine learning algorithm that’s commonly used for classification and regression problems.
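To illustrate how a decision node picks its split, here is a minimal sketch of a one-level tree (often called a decision stump) in plain Python. It uses Gini impurity as the split criterion, which is one common choice and an assumption here, since the text does not name a criterion. The ages and labels are made up.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 when all labels in a group are the same."""
    total = len(labels)
    if total == 0:
        return 0.0
    return 1.0 - sum((count / total) ** 2 for count in Counter(labels).values())


def best_split(values, labels):
    """Try thresholds between sorted unique values; keep the one with the lowest weighted impurity."""
    best_threshold, best_score = None, float("inf")
    unique = sorted(set(values))
    for low, high in zip(unique, unique[1:]):
        threshold = (low + high) / 2
        left = [label for v, label in zip(values, labels) if v <= threshold]
        right = [label for v, label in zip(values, labels) if v > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score


def fit_stump(values, labels):
    """Build one decision node with two leaves holding the majority label of each side."""
    threshold, _ = best_split(values, labels)
    left = [label for v, label in zip(values, labels) if v <= threshold]
    right = [label for v, label in zip(values, labels) if v > threshold]
    return threshold, Counter(left).most_common(1)[0][0], Counter(right).most_common(1)[0][0]


def predict(value, stump):
    """Follow the branch that matches the value and return the leaf label."""
    threshold, left_label, right_label = stump
    return left_label if value <= threshold else right_label


# Made-up data: customer age and whether they bought the product.
AGES = [22, 25, 30, 35, 40, 50]
BOUGHT = ["no", "no", "no", "yes", "yes", "yes"]

stump = fit_stump(AGES, BOUGHT)
```

On this toy data the stump splits at an age of 32.5, which separates the two classes perfectly. A full decision tree repeats the same search recursively inside each branch.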

Support Vector Machine

Support Vector Machines, also called SVMs, are supervised Machine Learning algorithms used for regression and classification problems. First introduced in the 1960s, SVMs are mainly used to solve classification problems. They’ve gained popularity recently since they can handle continuous and categorical variables.

Primarily, they’re used to separate data points of different classes by identifying a hyperplane. The hyperplane is chosen to maximize the distance between itself and the closest data points of each class. These closest data points are called support vectors.

Support Vector Machines are sophisticated ML algorithms that perform both regression and classification tasks and can process linear and non-linear data through kernels.
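The sketch below does not train an SVM. It only illustrates the two ideas described above, the margin and the support vectors, for a hand-picked hyperplane on made-up 2D points. The hyperplane `x + y - 4 = 0` and the function names are assumptions for this example; an actual SVM would search for the hyperplane that makes this margin as large as possible.

```python
import math


def signed_distance(point, weights, bias):
    """Distance from a point to the hyperplane weights . x + bias = 0 (the sign shows the side)."""
    norm = math.sqrt(sum(w * w for w in weights))
    return (sum(w * x for w, x in zip(weights, point)) + bias) / norm


def margin_and_support_vectors(points, labels, weights, bias):
    """Return the smallest class-aware distance and the points that attain it."""
    # Multiplying by the label (+1 or -1) makes correctly classified points positive.
    distances = [label * signed_distance(p, weights, bias) for p, label in zip(points, labels)]
    margin = min(distances)
    support = [p for p, d in zip(points, distances) if math.isclose(d, margin)]
    return margin, support


# Made-up, linearly separable data with labels -1 and +1.
POINTS = [(0, 0), (1, 1), (3, 3), (4, 4)]
LABELS = [-1, -1, 1, 1]

# Hypothetical hyperplane x + y - 4 = 0, chosen by hand for illustration.
WEIGHTS, BIAS = (1.0, 1.0), -4.0

margin, support_vectors = margin_and_support_vectors(POINTS, LABELS, WEIGHTS, BIAS)
```

Here the closest points on each side are `(1, 1)` and `(3, 3)`, so they play the role of support vectors, and the margin is their distance to the hyperplane.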

Dimensionality Reduction Algorithms

In Machine Learning, there can be too many variables to work with. In regression and classification tasks, these variables are called features. Dimensionality Reduction involves reducing the number of features in a dataset. This is done by transforming data from a high-dimensional feature space to a low-dimensional feature space while making sure that meaningful properties of the data are not lost in the process.

With less data to process, models train faster and require less computational time.

Why use Dimensionality Reduction Algorithms?

- It aids in data compression by reducing the number of features.

- Fewer dimensions mean less computation, so the algorithms train faster.

- It removes unnecessary, redundant features and noise.

- With fewer, less noisy features, model accuracy can improve.

Working with loads of features can be a daunting task. But with techniques like dimensionality reduction, we can keep the features that matter and remove the redundant ones.
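As one concrete example, the sketch below reduces 2D points to a single dimension using principal component analysis (PCA). PCA is a common dimensionality reduction technique, but it is an assumption here, since the text does not name a specific method. The dominant direction is found with power iteration in plain Python, and the data points are made up.

```python
import math


def center(rows):
    """Subtract the mean of each feature so the data is centered at the origin."""
    n = len(rows)
    means = [sum(column) / n for column in zip(*rows)]
    return [[value - mean for value, mean in zip(row, means)] for row in rows]


def covariance_matrix(centered):
    """Sample covariance between every pair of features."""
    n, dims = len(centered), len(centered[0])
    return [
        [sum(row[i] * row[j] for row in centered) / (n - 1) for j in range(dims)]
        for i in range(dims)
    ]


def top_component(matrix, iterations=100):
    """Power iteration: repeatedly multiply and normalize to find the dominant eigenvector."""
    vector = [1.0] * len(matrix)
    for _ in range(iterations):
        product = [sum(m * v for m, v in zip(row, vector)) for row in matrix]
        norm = math.sqrt(sum(p * p for p in product))
        vector = [p / norm for p in product]
    return vector


def project_to_1d(rows):
    """Replace each point by its coordinate along the direction of greatest variance."""
    centered = center(rows)
    direction = top_component(covariance_matrix(centered))
    return direction, [sum(v * d for v, d in zip(row, direction)) for row in centered]


# Made-up data: the second feature is exactly twice the first, so it is redundant.
POINTS = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]

direction, reduced = project_to_1d(POINTS)
```

Because the second feature carries no extra information in this toy dataset, the single coordinate in `reduced` preserves the ordering and spacing of the original points while halving the number of features.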

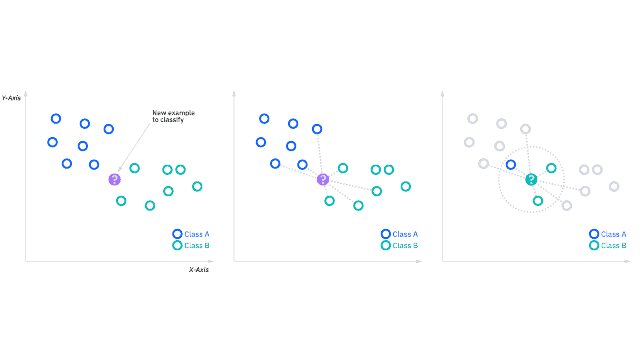

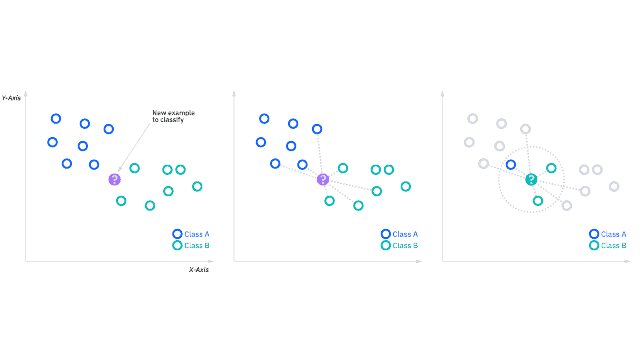

K-Nearest Neighbors

The K-nearest neighbors algorithm, also known as KNN, is a supervised machine learning algorithm used to perform classification and regression tasks using non-parametric ML principles. KNN is based on the idea that similar data points have similar labels or values. Here, k is the number of labeled neighbors considered when determining the result.

During training, this ML algorithm simply stores the entire training dataset as a reference. To make a prediction, the distance between the input data point and every training example is calculated.

The algorithm identifies the k nearest neighbors based on their distance to the input data point. For classification tasks, the most common class label among the k nearest neighbors is assigned as the predicted label for the input data point. For regression tasks, the average of the target values of the k nearest neighbors is used as the input data point’s value.

Why use K-Nearest Neighbors?

- Its implementation doesn’t require complex mathematical formulas or optimization techniques.

- It analyzes data without making any assumptions about its distribution or structure.

- Having just one hyperparameter, k, makes tuning easy.

- No explicit training step is required since all the work is carried out during prediction.

KNN is a lazy learning algorithm that makes predictions on the fly rather than from a previously trained model.
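The steps above can be sketched in a few lines of plain Python. Euclidean distance is assumed as the distance measure (the text does not specify one), the data is made up, and `math.dist` requires Python 3.8 or newer.

```python
import math
from collections import Counter


def k_nearest(train_points, query, k):
    """Return the indices of the k stored training points closest to the query."""
    order = sorted(range(len(train_points)), key=lambda i: math.dist(train_points[i], query))
    return order[:k]


def knn_classify(train_points, labels, query, k=3):
    """Classification: majority vote among the k nearest neighbors."""
    votes = Counter(labels[i] for i in k_nearest(train_points, query, k))
    return votes.most_common(1)[0][0]


def knn_regress(train_points, targets, query, k=3):
    """Regression: average target value of the k nearest neighbors."""
    neighbors = k_nearest(train_points, query, k)
    return sum(targets[i] for i in neighbors) / k


# Made-up training data: two clusters with class labels and numeric targets.
TRAIN_POINTS = [(1, 1), (1, 2), (2, 1), (6, 6), (7, 6), (6, 7)]
LABELS = ["A", "A", "A", "B", "B", "B"]
TARGETS = [10, 12, 11, 50, 52, 51]

predicted_label = knn_classify(TRAIN_POINTS, LABELS, (2, 2))
predicted_value = knn_regress(TRAIN_POINTS, TARGETS, (2, 2))
```

Note that there is no separate fitting function: the "model" is just the stored training data, and all the distance computation happens at prediction time.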

How Can Deep Learning Models Surpass ML?

Deep Learning models can outperform Machine Learning algorithms in various tasks because of their ability to model complex patterns and relationships directly from data rather than relying on manually engineered features. Let us explore how Deep Learning excels in many domains.

Automatic Feature Extraction

A deep learning algorithm learns to identify features automatically instead of using hand-engineered features, as is the case with traditional machine learning models. This capability is particularly useful in domains like image recognition and speech recognition, where it is extremely difficult to design effective features manually.

Hierarchical Feature Learning

A deep learning model builds a hierarchical representation of the data by learning multiple layers of representation. This allows the model to effectively capture both simple and complex patterns.

Handling High-Dimensional Data

Unlike traditional machine learning models, deep learning models thrive on high-dimensional data (like images, videos, and text), where traditional models can struggle. For example, a single image can contain tens of thousands or even millions of pixel values.
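A quick back-of-the-envelope calculation shows how fast the number of input values grows with image size. The resolutions below are arbitrary examples, and three color channels (RGB) are assumed.

```python
def values_in_image(width, height, channels=3):
    """Number of raw values a model receives for one image (channels=3 assumes RGB)."""
    return width * height * channels


small_image = values_in_image(100, 100)      # 30,000 values
full_hd_image = values_in_image(1920, 1080)  # 6,220,800 values
```

Each of these values is a separate input dimension, which is why hand-crafting features for raw images quickly becomes impractical.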

Scalability and Performance

Deep learning models tend to keep improving as more data becomes available, while traditional machine learning algorithms often plateau. This scalability is crucial in large-scale applications, such as those encountered in Big Data contexts.

Flexibility and Adaptability

With only minor adjustments to their architecture, deep learning models can be adapted to a wide range of tasks. For example, the same convolutional neural network (CNN) architecture can be used for image classification, object detection, and even video analysis.

End-to-End Learning

The benefits of deep learning include end-to-end learning, which allows a model to be trained directly from raw data to output results, reducing the need for intermediate steps that require domain expertise. This is a significant advantage in complex tasks where the relation between input data and output is unclear.

State-of-the-Art Results

Deep learning has delivered state-of-the-art results in many fields, surpassing traditional machine learning models and, in some cases, even human experts. Examples include playing complex games like Go and diagnosing medical conditions based on imaging.

Although deep learning models are powerful, they also come with some drawbacks, such as the need for large quantities of labeled data, their computational complexity, and their lack of transparency (they are often described as “black boxes”). Therefore, the choice between deep learning and traditional machine learning depends on the specific requirements and constraints of the task at hand.

Deep Learning vs Machine Learning: The Showdown

We’ve finally reached the crux of this read. We saw earlier how Deep Learning models are better than Machine Learning algorithms in some aspects. In this section, we’ll discuss the differentiating factors, compare their performance, and also look at some of their real-world applications.

| Differentiating Factors | Machine Learning | Deep Learning |
| --- | --- | --- |
| Definition | ML is a subfield of AI that makes predictions and decisions based on statistical models and algorithms by leveraging historical data. | DL is a subfield of ML that tries to replicate the workings of a human brain by creating artificial neural networks that can make intelligent decisions. |
| Architecture | ML is based on traditional statistical models. | DL uses artificial neural networks with multiple layers of nodes. |
| Learning Process | 1. New information is received via a user query 2. Analyzes the data 3. Recognizes a pattern 4. Makes predictions 5. The answer is sent back to the user | 1. Data is acquired 2. Data preprocessing 3. Data splitting and balancing 4. Model building and training 5. Performance evaluation 6. Hyperparameter tuning 7. Model deployment |
| Computational & Data Requirements | 1. ML needs less computational power than DL. 2. ML is easier to set up and analyze. 3. ML still needs a sizable amount of data for effective training. | 1. DL can work on unstructured data like images, text, or audio. 2. It requires high computational power because of its complexity. 3. DL requires large amounts of data for training. |
| Feature Engineering | ML requires the engineer to identify the relevant features, which are then hand-coded based on the domain and data type. | DL reduces the task of developing new feature extractors for every problem by learning high-level features from data. |
| Types | ML can be broadly classified into 1. Supervised Learning 2. Unsupervised Learning 3. Reinforcement Learning | DL has these models 1. Convolutional Neural Networks 2. Recurrent Neural Networks 3. Multilayer Perceptron |
| Processing Techniques | ML uses various techniques like data processing or image processing. | DL relies on neural networks that consist of multiple layers. |
| Problem-solving Approach | In ML, the problem is divided into parts that are solved individually and later combined to get the final result. | With DL, we can solve the problem from end to end. |
| Drawbacks | Some flaws of ML include 1. Algorithm development requires a high level of technical knowledge and skill. 2. Some ML algorithms are hard to interpret, making it difficult to understand how the predictions were made. 3. ML algorithms require voluminous data for effective training. 4. If trained on biased data, the models can be biased. | Some flaws of DL include 1. DL models require high computational resources like ample memory and powerful GPUs. 2. Since the models depend on data quality, performance can be affected negatively if the data is incomplete or noisy. 3. Since DL models are trained on large volumes of data, there is a high chance of data privacy and security concerns. |
| Performance Comparison | 1. ML usually performs well on simple problems. 2. ML algorithms improve in accuracy as they learn from data. 3. They are highly scalable and capable of handling large datasets. 4. ML doesn’t need as much computational power as DL and is easier to set up and analyze. 5. ML requires careful feature selection and tuning of parameters for optimal performance. 6. ML algorithms require human intervention for feature extraction. | 1. DL excels at solving complex problems. 2. DL can achieve high accuracy on complex tasks like NLP or robotics. 3. DL can have shorter training times with parallel processing hardware. 4. Although DL requires high compute resources, its performance gets better with more data. 5. It requires tuning of many parameters, but once the network architecture is set, it learns on its own from raw data. 6. Unlike ML, DL models don’t require human intervention for feature extraction. |
| Career Opportunities | With ML, one can pursue roles such as 1. ML Engineer 2. Data Scientist 3. Data Analyst 4. Business Intelligence Analyst Requires expertise in | With DL, one can pursue roles such as 1. DL Engineer 2. AI Research Scientist 3. CV Engineer 4. Robotics Engineer 5. Solutions Architect Requires expertise in |

Conclusion

That’s a wrap for this article. We looked into the definitions of Machine Learning and Deep Learning, ran through common ML algorithms, and finally compared Deep Learning vs Machine Learning across some of the key differentiating factors.

Keep your eyes peeled; more fun and comprehensive reads are coming your way on Artificial Intelligence, Deep Learning, and Computer Vision.

See you guys in the next one!