Chatbots like ChatGPT, Claude.ai, and phind can be quite helpful, but you might not always want your questions or sensitive data handled by an external application. That's especially true on platforms where your interactions may be reviewed by humans and otherwise used to help train future models.

One solution is to download a large language model (LLM) and run it on your own machine. That way, an outside company never has access to your data. This is also a quick option to try some new specialty models such as Meta's recently announced Code Llama family of models, which are tuned for coding, and SeamlessM4T, aimed at text-to-speech and language translations.

Running your own LLM might sound complicated, but with the right tools, it's surprisingly easy. And the hardware requirements for many models aren't crazy. I've tested the options presented in this article on two systems: a Dell PC with an Intel i9 processor, 64GB of RAM, and an Nvidia GeForce 12GB GPU (which likely wasn't engaged running much of this software), and on a Mac with an M1 chip but just 16GB of RAM.

Be advised that it may take a little research to find a model that performs reasonably well for your task and runs on your desktop hardware. And few may be as good as what you're used to with a tool like ChatGPT (especially with GPT-4) or Claude.ai. Simon Willison, creator of the command-line tool LLM, argued in a presentation last month that running a local model could be worthwhile even if its responses are wrong:

"[Some of] the ones that run on your laptop will hallucinate like wild, which I think is actually a great reason to run them, because running the weak models on your laptop is a much faster way of understanding how these things work and what their limitations are."

It's also worth noting that open source models are likely to keep improving, and some industry watchers expect the gap between them and commercial leaders to narrow.

Run a local chatbot with GPT4All

If you want a chatbot that runs locally and won't send data elsewhere, GPT4All offers a desktop client for download that's quite easy to set up. It includes options for models that run on your own system, and there are versions for Windows, macOS, and Ubuntu.

When you open the GPT4All desktop application for the first time, you'll see options to download around 10 (as of this writing) models that can run locally. Among them is Llama-2-7B chat, a model from Meta AI. If you have an API key, you can also set up OpenAI's GPT-3.5 and GPT-4 (if you have access) for non-local use.

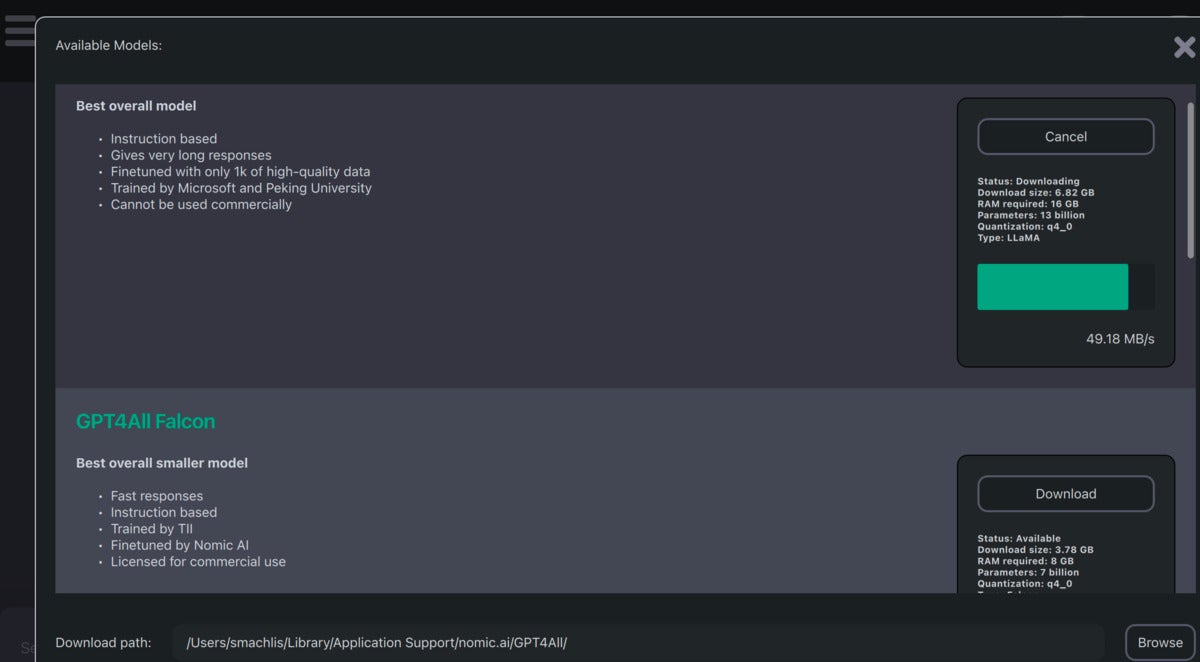

The model-download portion of the GPT4All interface was a bit confusing at first. After I downloaded several models, I still saw the option to download them all. That suggested the downloads didn't work. However, when I checked the download path, the models were there.

A portion of the model download interface in GPT4All. Once I opened the usage portion of the application, my downloaded models automatically appeared. (Screenshot by Sharon Machlis for IDG)

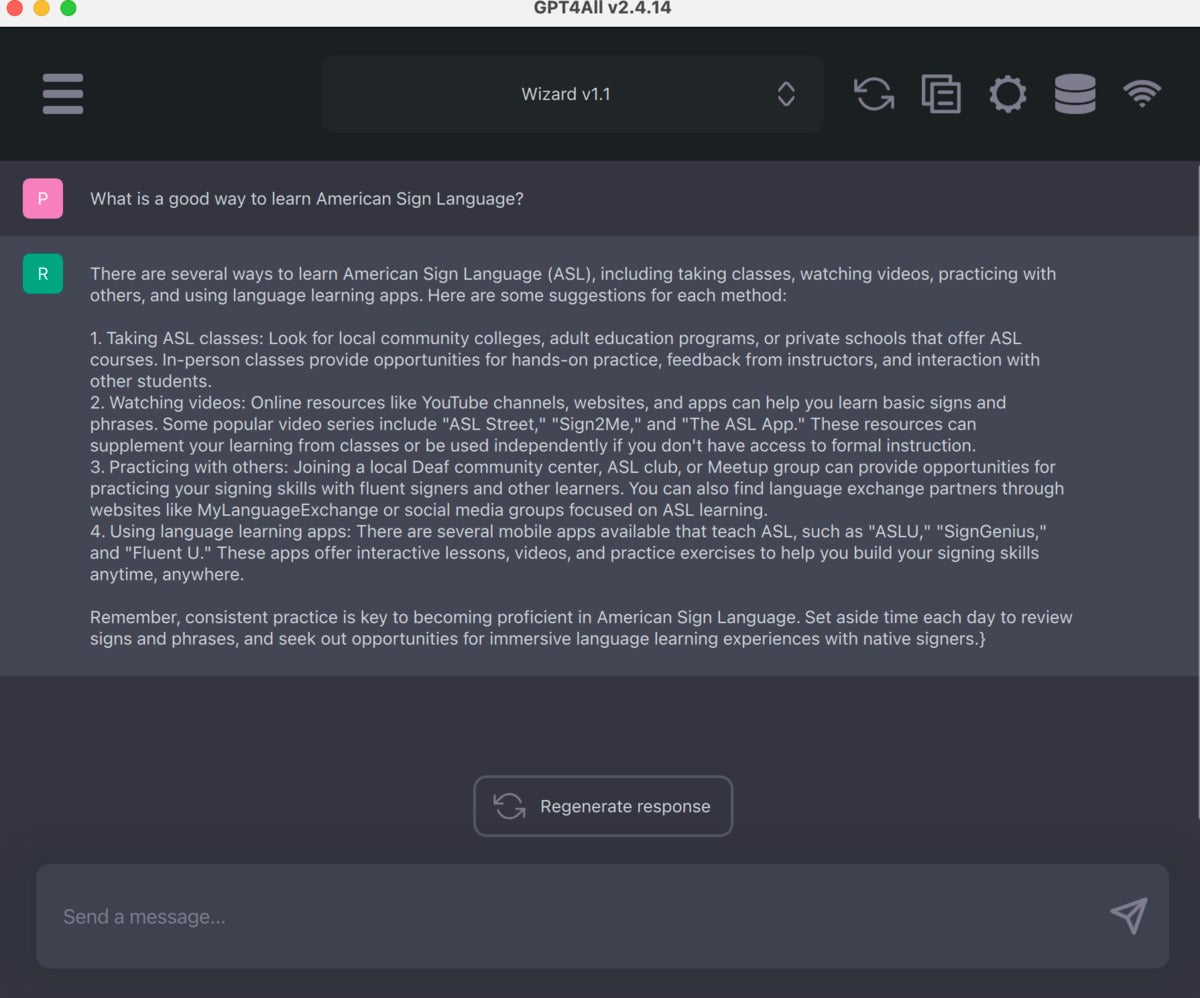

Once the models are set up, the chatbot interface itself is clean and easy to use. Handy options include copying a chat to a clipboard and generating a response.

The GPT4All chat interface is clean and easy to use. (Screenshot by Sharon Machlis for IDG)

There's also a new beta LocalDocs plugin that lets you "chat" with your own documents locally. You can enable it in the Settings > Plugins tab, where you'll see a "LocalDocs Plugin (BETA) Settings" header and an option to create a collection at a specific folder path.

The plugin is a work in progress, and documentation warns that the LLM may still "hallucinate" (make things up) even when it has access to your added expert information. Nevertheless, it's an interesting feature that's likely to improve as open source models become more capable.

In addition to the chatbot application, GPT4All also has bindings for Python, Node, and a command-line interface (CLI). There's also a server mode that lets you interact with the local LLM through an HTTP API structured very much like OpenAI's. The goal is to let you swap in a local LLM for OpenAI's by changing a couple of lines of code.
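To make the "swap in a local LLM" idea concrete, here is a minimal Python sketch that builds an OpenAI-style chat request using only the standard library. The local base URL (http://localhost:4891/v1), the /chat/completions path, and the response shape read in `ask` are assumptions for illustration, not details from this article, so check them against the GPT4All documentation and your own server settings.

```python
import json
import urllib.request

# Assumed values for illustration only; confirm them in your GPT4All settings.
LOCAL_BASE_URL = "http://localhost:4891/v1"
OPENAI_BASE_URL = "https://api.openai.com/v1"


def build_chat_request(base_url, model, prompt, api_key="not-needed-locally"):
    """Build an OpenAI-style chat completion request for the given server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(base_url, model, prompt, api_key="not-needed-locally"):
    """Send the request and return the text of the first reply.

    Assumes the server answers with an OpenAI-style JSON body.
    """
    request = build_chat_request(base_url, model, prompt, api_key)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With server mode running, a call such as `ask(LOCAL_BASE_URL, "your-local-model-name", "Tell me a joke")` would go to the local model (the model name here is a placeholder). Passing `OPENAI_BASE_URL`, a real API key, and an OpenAI model name instead is the "couple of lines" that change.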

LLMs on the command line

LLM by Simon Willison is one of the easier ways I've seen to download and use open source LLMs locally on your own machine. While you do need Python installed to run it, you shouldn't need to touch any Python code. If you're on a Mac and use Homebrew, just install with

brew install llm

If you're on a Windows machine, use your favorite way of installing Python libraries, such as

pip install llm

LLM defaults to using OpenAI models, but you can use plugins to run other models locally. For example, if you install the gpt4all plugin, you'll have access to additional local models from GPT4All. There are also plugins for llama, the MLC project, and MPT-30B, as well as additional remote models.

Install a plugin on the command line with llm install model-name:

llm install llm-gpt4all

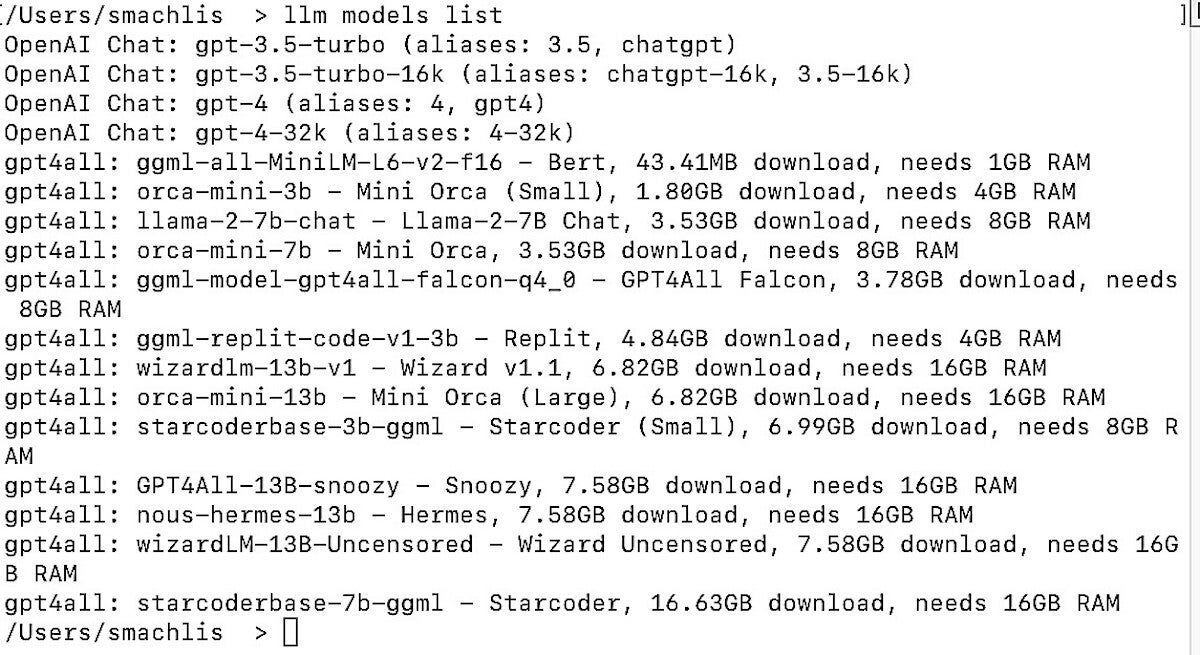

You can see all available models, both remote ones and those you've installed, along with brief info about each one, with the command: llm models list.

The display when you ask LLM to list available models. (Screenshot by Sharon Machlis for IDG)

To send a query to a local LLM, use the syntax:

llm -m the-model-name "Your question"

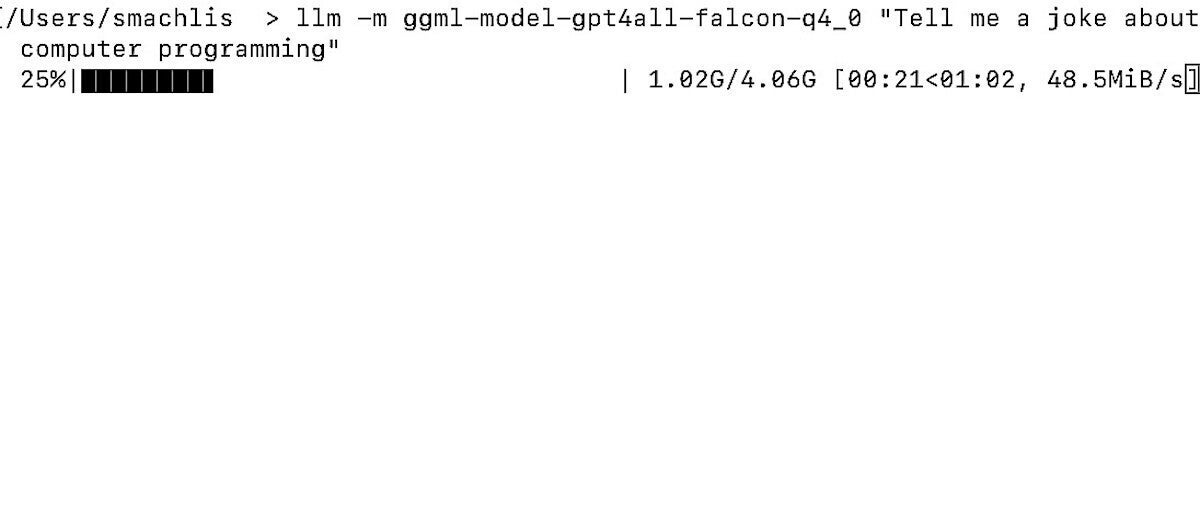

I then asked it a ChatGPT-like question without issuing a separate command to download the model:

llm -m ggml-model-gpt4all-falcon-q4_0 "Tell me a joke about computer programming"

This is one thing that makes the LLM user experience so elegant: If the GPT4All model doesn't exist on your local system, the LLM tool automatically downloads it for you before running your query. You'll see a progress bar in the terminal as the model is downloading.

LLM automatically downloaded the model I used in a query. (Screenshot by Sharon Machlis for IDG)

The joke itself wasn't outstanding ("Why did the programmer turn off his computer? Because he wanted to see if it was still working!"), but the query did, in fact, work. And if results are disappointing, that's because of model performance or inadequate user prompting, not the LLM tool.

You can also set aliases for models within LLM, so that you can refer to them by shorter names:

llm aliases set falcon ggml-model-gpt4all-falcon-q4_0

To see all your available aliases, enter: llm aliases.

The LLM plugin for Meta's Llama models requires a bit more setup than GPT4All does. Read the details on the LLM plugin's GitHub repo. Note that the general-purpose llama-2-7b-chat did manage to run on my work Mac with the M1 Pro chip and just 16GB of RAM. It ran rather slowly compared with the GPT4All models optimized for smaller machines without GPUs, and performed better on my more robust home PC.

LLM has other features, such as an argument flag that lets you continue from a prior chat and the ability to use it within a Python script. And in early September, the app gained tools for generating text embeddings, numerical representations of what the text means that can be used to search for related documents. You can see more on the LLM website. Willison, co-creator of the popular Python Django framework, hopes that others in the community will contribute more plugins to the LLM ecosystem.
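One low-effort way to reach the tool from a script is simply to shell out to the command line. The sketch below does that with Python's standard library; it is not LLM's own Python API, and the helper names are made up for illustration. It assumes the `-m` option shown earlier plus a `-c` flag for continuing the most recent conversation, which you should confirm with `llm --help` on your install.

```python
import shutil
import subprocess


def build_llm_command(prompt, model=None, continue_chat=False):
    """Return the argument list for one call to the llm command-line tool."""
    command = ["llm"]
    if model:
        command += ["-m", model]
    if continue_chat:
        command.append("-c")  # assumed flag: continue the most recent conversation
    command.append(prompt)
    return command


def run_llm(prompt, model=None, continue_chat=False):
    """Run llm and return its reply, or None if llm is not on the PATH."""
    if shutil.which("llm") is None:
        return None
    result = subprocess.run(
        build_llm_command(prompt, model, continue_chat),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()
```

For example, `run_llm("Tell me a joke about computer programming", model="falcon")` would use the alias set above, and a follow-up call with `continue_chat=True` would build on that exchange.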

Llama models on your desktop: Ollama

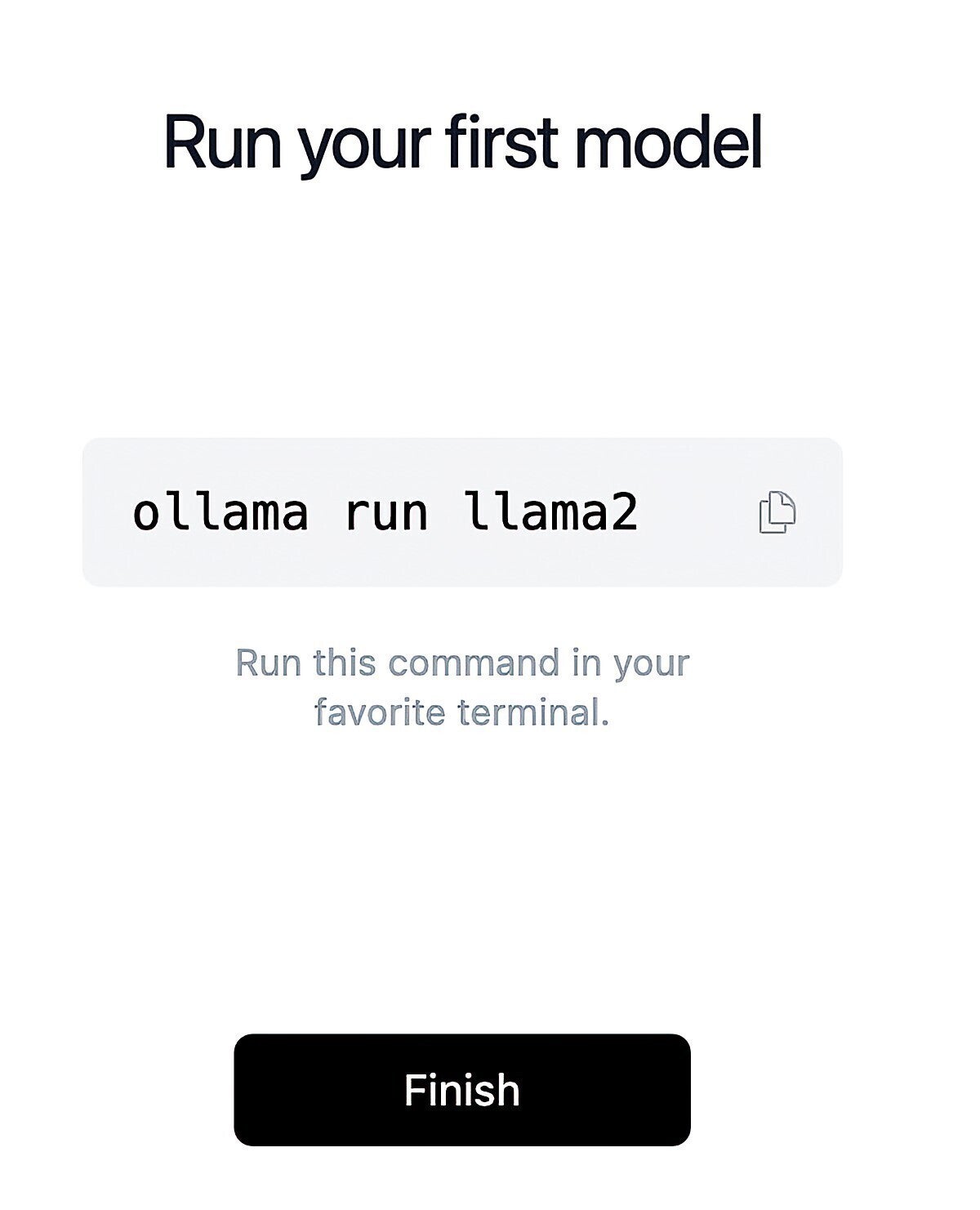

Ollama is an even easier way to download and run models than LLM. However, the project was limited to macOS and Linux until mid-February, when a preview version for Windows finally became available. I tested the Mac version.

Setting up Ollama is extremely simple. (Screenshot by Sharon Machlis for IDG)

Installation is an elegant point-and-click experience. And although Ollama is a command-line tool, there's just one command, with the syntax ollama run model-name. As with LLM, if the model isn't on your system already, it will automatically download.

You can see the list of available models at https://ollama.ai/library, which as of this writing included several versions of Llama-based models such as general-purpose Llama 2, Code Llama, CodeUp from DeepSE fine-tuned for some programming tasks, and medllama2 that's been fine-tuned to answer medical questions.

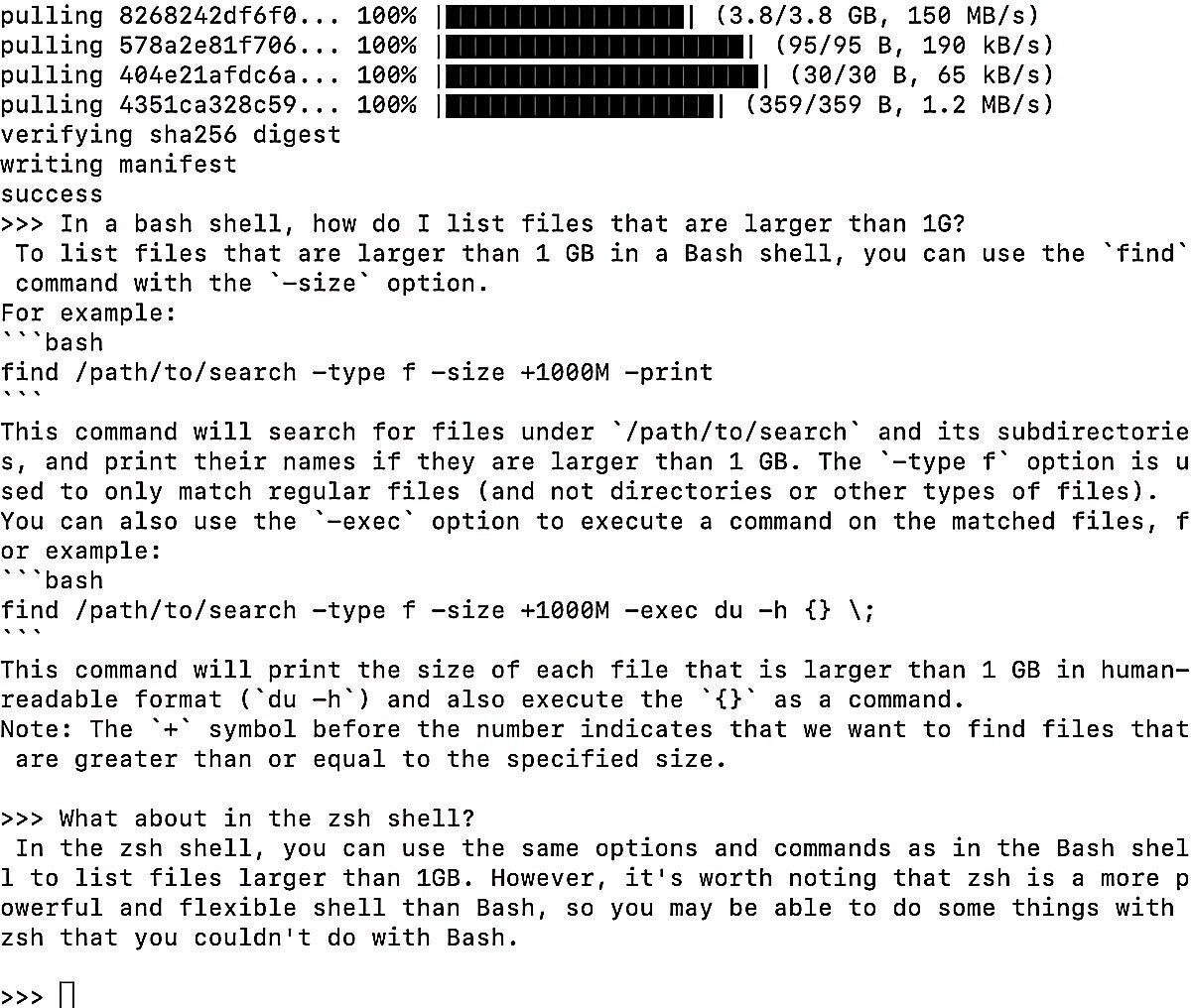

The Ollama GitHub repo's README includes a helpful list of some model specs and advice that "You should have at least 8GB of RAM to run the 3B models, 16GB to run the 7B models, and 32GB to run the 13B models." On my 16GB RAM Mac, the 7B Code Llama performance was surprisingly snappy. It will answer questions about bash/zsh shell commands as well as programming languages like Python and JavaScript.
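The README guidance quoted above amounts to a small lookup, sketched here in Python. The thresholds come straight from that quote; the function name is made up for illustration, and the result is only a rule of thumb, since real memory use also depends on quantization and whatever else is running.

```python
# Thresholds from the README guidance quoted above: (minimum RAM in GB, model size).
RAM_GUIDANCE = [(32, "13B"), (16, "7B"), (8, "3B")]


def largest_recommended_model(ram_gb):
    """Return the largest model size the quoted guidance allows, or None."""
    for minimum_ram_gb, model_size in RAM_GUIDANCE:
        if ram_gb >= minimum_ram_gb:
            return model_size
    return None


# The two test machines described in this article, plus an 8GB laptop.
for ram_gb in (8, 16, 64):
    print(f"{ram_gb}GB RAM: up to {largest_recommended_model(ram_gb)} models")
```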

How it looks running Code Llama in an Ollama terminal window. (Screenshot by Sharon Machlis for IDG)

Despite being the smallest model in the family, it was pretty good, if imperfect, at answering an R coding question that tripped up some larger models: "Write R code for a ggplot2 graph where the bars are steel blue color." The code was correct except for two extra closing parentheses in two of the lines of code, which were easy enough to spot in my IDE. I suspect the larger Code Llama could have done better.

Ollama has some additional features, such as LangChain integration and the ability to run with PrivateGPT, which may not be obvious unless you check the GitHub repo's tutorials page.

If you're on a Mac and want to use Code Llama, you could have this running in a terminal window and pull it up every time you have a question. I'm looking forward to an Ollama Windows version to use on my home PC.

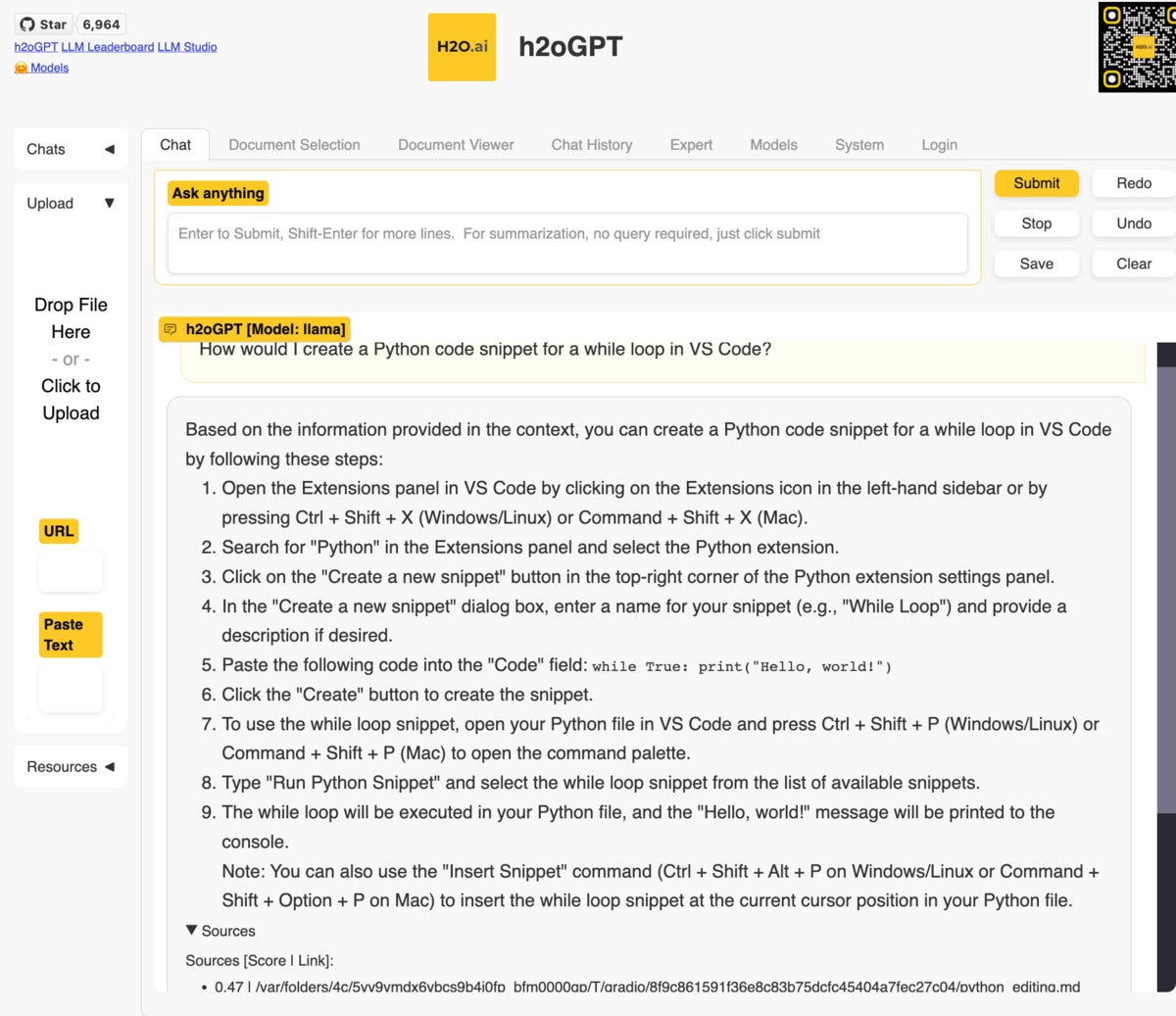

Chat with your own documents: h2oGPT

H2O.ai has been working on automated machine learning for some time, so it's natural that the company has moved into the chat LLM space. Some of its tools are best used by people with knowledge of the field, but instructions to install a test version of its h2oGPT chat desktop application were quick and straightforward, even for machine learning novices.

You can access a demo version on the web (obviously not using an LLM local to your system) at gpt.h2o.ai, which is a useful way to find out if you like the interface before downloading it onto your own system.

You can download a basic version of the app with limited ability to query your own documents by following setup instructions here.

A local LLaMa model answers questions based on VS Code documentation. (Screenshot by Sharon Machlis for IDG)

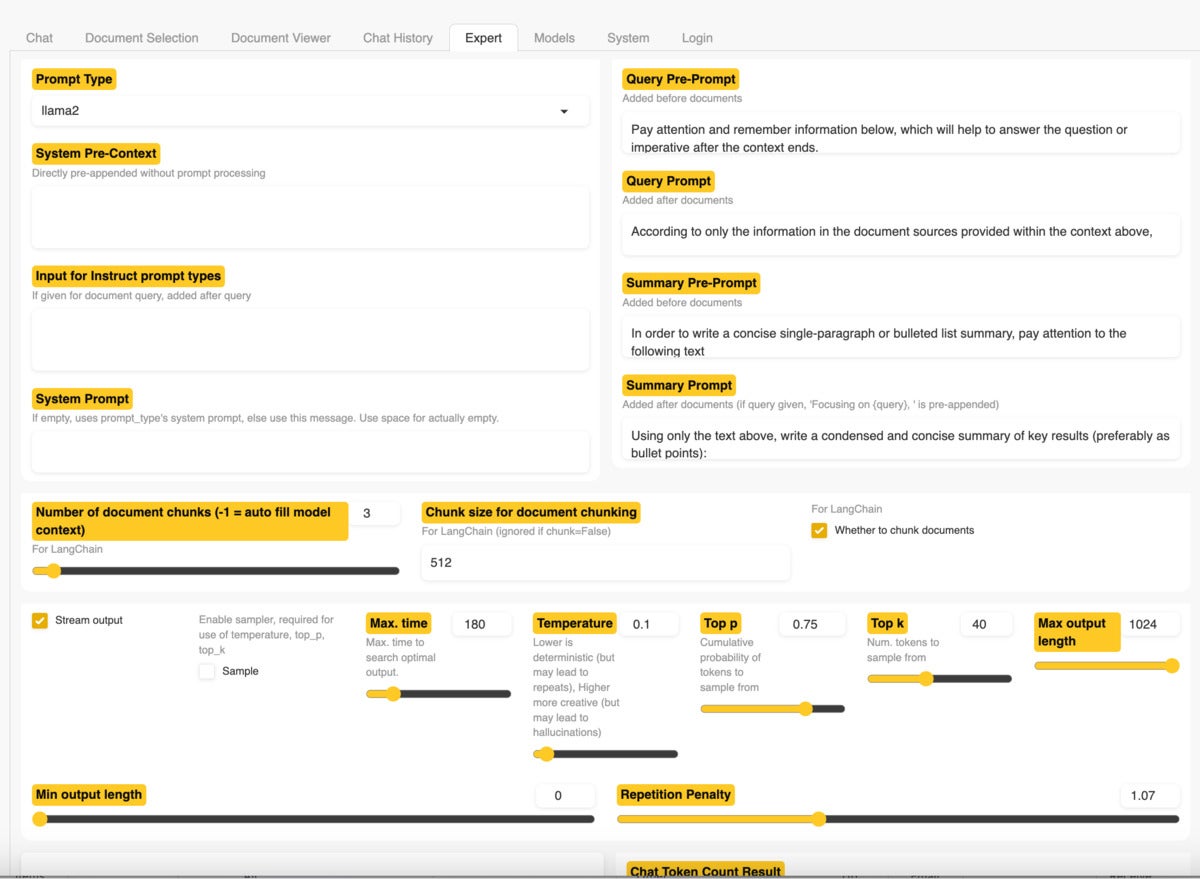

Without adding your own files, you can use the application as a general chatbot. Or, you can upload some documents and ask questions about those files. Compatible file formats include PDF, Excel, CSV, Word, text, markdown, and more. The test application worked fine on my 16GB Mac, although the smaller model's results didn't compare to paid ChatGPT with GPT-4 (as always, that's a function of the model and not the application). The h2oGPT UI offers an Expert tab with a number of configuration options for users who know what they're doing. This gives more experienced users the option to try to improve their results.

Exploring the Expert tab in h2oGPT. (Screenshot by Sharon Machlis for IDG)

If you want more control over the process and options for more models, download the complete application. There are one-click installers for Windows and macOS, for systems with a GPU or with CPU only. Note that my Windows antivirus software was unhappy with the Windows version because it was unsigned. I'm familiar with H2O.ai's other software and the code is available on GitHub, so I was willing to download and install it anyway.

Rob Mulla, now at H2O.ai, posted a YouTube video on his channel about installing the app on Linux. Although the video is a couple of months old now, and the application user interface appears to have changed, the video still has useful info, including helpful explanations about H2O.ai LLMs.

Easy but slow chat with your data: PrivateGPT

PrivateGPT is also designed to let you query your own documents using natural language and get a generative AI response. The documents in this application can include several dozen different formats. And the README assures you that the data is "100% private, no data leaves your execution environment at any point. You can ingest documents and ask questions without an internet connection!"

PrivateGPT features scripts to ingest data files, split them into chunks, create "embeddings" (numerical representations of the meaning of the text), and store those embeddings in a local Chroma vector store. When you ask a question, the app searches for relevant documents and sends just those to the LLM to generate an answer.
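The ingest-then-retrieve flow described above can be sketched in a few lines of plain Python. This is a toy illustration of the general pattern, not PrivateGPT's code: word counts stand in for a real embedding model, an in-memory list stands in for the Chroma vector store, and the two sample documents are invented.

```python
import math
import re
from collections import Counter


def split_into_chunks(text, max_words=40):
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_store(documents, max_words=40):
    """Chunk every document and keep (chunk, embedding) pairs in memory."""
    store = []
    for document in documents:
        for chunk in split_into_chunks(document, max_words):
            store.append((chunk, embed(chunk)))
    return store


def retrieve(store, question, top_k=1):
    """Return the top_k stored chunks most similar to the question."""
    question_vector = embed(question)
    ranked = sorted(
        store,
        key=lambda item: cosine_similarity(question_vector, item[1]),
        reverse=True,
    )
    return [chunk for chunk, _ in ranked[:top_k]]


# Invented sample documents, for illustration only.
documents = [
    "Invoices are due within thirty days of the billing date.",
    "To reset your password, open Settings and choose Security.",
]
question = "How do I reset my password?"
store = build_store(documents)
context = retrieve(store, question)

# Only the retrieved chunk, not the whole collection, goes into the prompt.
prompt = f"Answer using only this context:\n{context[0]}\n\nQuestion: {question}"
print(prompt)
```

A real setup swaps `embed` for a learned embedding model, which can match text by meaning rather than by shared words, and that is why it can find relevant passages even when the question uses different vocabulary.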

If you're familiar with Python and how to set up Python projects, you can clone the full PrivateGPT repository and run it locally. If you're less knowledgeable about Python, you may want to check out a simplified version of the project that author Iván Martínez set up for a conference workshop, which is considerably easier to set up.

That version's README file includes detailed instructions that don't assume Python sysadmin expertise. The repo comes with a source_documents folder full of Penpot documentation, but you can delete those and add your own.