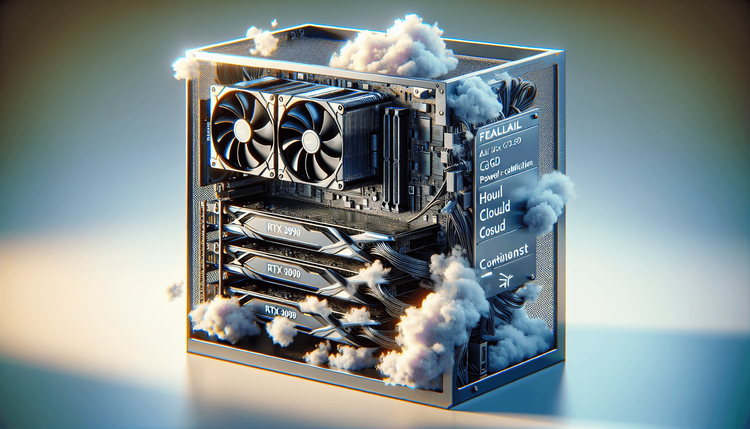

A hardware-focused tutorial on building a dedicated AI inference server from consumer components. Focus on the sweet spot of dual used RTX 3090s or a single RTX 4090.

Key Sections:

1. **Component Selection:** Why VRAM is king. The concept of 'VRAM per dollar'.

2. **The Build:** Physical assembly notes, cooling requirements for sustained load.

3. **BIOS & OS Configuration:** PCIe bifurcation, Ubuntu Server optimizations, NVIDIA driver headless setup.

4. **Model Partitioning:** Using tensor parallelism to split 70B+ models across consumer cards.

5. **Cost vs Cloud:** ROI calculation showing the break-even point against GPT-4 API costs.
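
The arithmetic behind sections 1, 4, and 5 can be sketched in a few lines of Python. This is a rough illustration, not a sizing tool: the per-card prices, the monthly API spend, and the monthly power cost are hypothetical placeholders, and the helper names (`GpuOption`, `weights_gb`, `break_even_months`) are invented for this sketch. The only figures taken as given are the 24 GB of VRAM on both the RTX 3090 and RTX 4090 and the $1,500 budget from the article title. The weight estimate covers model weights only and ignores KV cache and activation memory.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GpuOption:
    name: str
    vram_gb: int      # per-card VRAM (24 GB on both the RTX 3090 and RTX 4090)
    cards: int
    price_usd: float  # HYPOTHETICAL total GPU price; replace with a real quote

    @property
    def total_vram_gb(self) -> int:
        return self.vram_gb * self.cards

    @property
    def vram_gb_per_dollar(self) -> float:
        return self.total_vram_gb / self.price_usd


def weights_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights alone, in GB."""
    return params_billion * bits_per_weight / 8


def break_even_months(hardware_usd: float,
                      monthly_api_usd: float,
                      monthly_power_usd: float) -> Optional[float]:
    """Months until the hardware pays for itself, or None if it never does."""
    monthly_savings = monthly_api_usd - monthly_power_usd
    if monthly_savings <= 0:
        return None
    return hardware_usd / monthly_savings


if __name__ == "__main__":
    # Prices below are placeholders, not market data.
    options = [
        GpuOption("2x used RTX 3090", vram_gb=24, cards=2, price_usd=1500.0),
        GpuOption("1x RTX 4090", vram_gb=24, cards=1, price_usd=1800.0),
    ]
    need = weights_gb(70, 4)  # 70B parameters at 4-bit quantization
    for opt in options:
        fits = "fits" if opt.total_vram_gb > need else "does not fit"
        print(f"{opt.name}: {opt.total_vram_gb} GB total, "
              f"{opt.vram_gb_per_dollar:.4f} GB/$, 70B @ 4-bit {fits}")

    # Hypothetical monthly API spend and electricity cost.
    print(break_even_months(1500.0, monthly_api_usd=150.0, monthly_power_usd=25.0))
```

Under these placeholder numbers, the 4-bit weights of a 70B model exceed a single 24 GB card, which is why the outline pairs tensor parallelism with the dual-card build.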

**Internal Linking Strategy:** Link back to the pillar article. Link natively to 'Deploying Local LLMs to Kubernetes' for next steps.

Continue reading The $1,500 Local AI Server: DeepSeek-R1 on Consumer Hardware on SitePoint.