Introduction

During one of the cricket matches in the ICC T20 World Cup, Rohit Sharma, captain of the Indian cricket team, applauded Jasprit Bumrah as a genius bowler. I decided to run an experiment and test that claim using publicly available data. Even though it is a fun project, I was pleasantly surprised by the results. Let us get started.

Problem Definition

To analyze data or build models, we need to convert the business problem into a data problem. How do we make our model understand the meaning of genius? Well, genius can be defined as “way over, or head and shoulders above, the rest”. Can we formulate this as an anomaly detection problem? Yes.

There are many ways to solve an anomaly detection problem. We will stick to AutoEncoders using PyTorch.

We will use publicly available T20 player statistics from the cricketdata R package to train our AutoEncoder model. If the AutoEncoder struggles to reconstruct a player's values, the Mean Squared Error (MSE) for that player will be high. An MSE above a threshold is treated as an anomaly.

In our case, the MSE for Jasprit Bumrah should be high enough to be flagged as an anomaly, or genius.

Learning Objectives

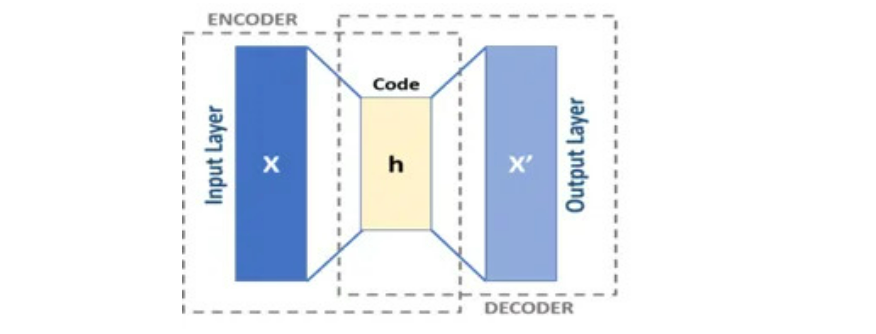

- Grasp the basic architecture of AutoEncoders, including the roles of the encoder and decoder networks.

- Understand how to use reconstruction error (Mean Squared Error) from AutoEncoders to identify anomalies.

- Learn to preprocess data, create datasets, and set up data loaders for training and testing models.

- Understand the process of training an AutoEncoder, including setting hyperparameters, loss functions, and optimizers.

- Explore real-world applications of anomaly detection, such as customer management and fraud detection.

This article was published as a part of the Data Science Blogathon.

What are AutoEncoders?

AutoEncoders are composed of two networks: an Encoder and a Decoder. The Encoder receives a D-dimensional vector V and encodes it into a vector X of dimension M, where M < D. Hence, the Encoder compresses our input. The Decoder decompresses X and tries to recreate V as closely as possible. Let us call the output of the Decoder Z.

Typically, the Decoder is able to recreate V for most rows, i.e. for most rows Z is close to V. But for certain rows, the Decoder struggles to decode and the difference between Z and V is large. We call these rows anomalies. Anomalies usually have a high Mean Squared Error (MSE).
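To make the idea concrete, here is a minimal sketch with made-up numbers (not the cricket data). `V` holds three input rows and `Z` is a hypothetical decoder output; both are assumptions for illustration. The per-row MSE shows which row was reconstructed poorly.

```python
import numpy as np

# Hypothetical inputs V and decoder outputs Z (illustrative values only).
V = np.array([[1.0, 2.0, 3.0],
              [0.5, 0.0, -1.0],
              [4.0, -2.0, 1.0]])
Z = np.array([[1.1, 1.9, 3.0],
              [0.4, 0.1, -1.0],
              [1.0, 1.0, 1.0]])

# Per-row reconstruction error: mean of squared differences across features.
row_mse = np.mean((V - Z) ** 2, axis=1)

# The row with the largest error is the anomaly candidate.
worst_row = int(np.argmax(row_mse))
print(row_mse, worst_row)
```

The first two rows are reconstructed almost exactly, while the third is far off, so it is the one an MSE threshold would flag.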

Real World Applications of AutoEncoder

Let us now explore real world applications of AutoEncoder.

Customer Management

Suppose an organization deals with a lot of customers and has a way to label customers as good or bad, clean or risky, wealthy or non-wealthy. An AutoEncoder trained only on good, clean or wealthy customers can learn the patterns of these top or ideal customers. When a new customer comes in, we have a reliable way to know how different the new customer is from the ideal customer. You may argue that this can be done manually. Humans are limited by the number of variables and the amount of data they can handle. Machines do not have this limitation.

Fraud Management

Similar to the above, suppose an organization has a method to label transactions as fraudulent or non-fraudulent. We can train our AutoEncoder on non-fraudulent transactions alone, and in production environments we have a reliable mechanism to know how different a new transaction is from an ideal transaction.

The above is not an exhaustive list of applications of AutoEncoder.

Let us now return to our original problem.

Data Collection, Cleaning and Feature Engineering

I collected T20 career statistics data of bowlers here.

I used the R library cricketdata to download player T20 career statistics, as a Python version of the same is not available as far as I know. The T20 statistics do not include leagues like the IPL.

library(cricketdata)

# T20 Career Data

t20_career <- fetch_cricinfo("T20", "men", "Bowling",'career')

# T20 Innings Data

t20_innings <- fetch_cricinfo("T20", "men", "Bowling",'innings')

We need to join both these datasets and create the final input dataset to be used for training the AutoEncoder in Python. Before saving the file to disk, we need to keep only Test playing countries for our analysis.

final<-final[Country %in% c('Australia','West Indies','South Africa'

,'Pakistan','Afghanistan','India'

,'Sri Lanka','England','New Zealand'

,'BAN')]

fwrite(final,'T20_Stats_Career.txt',sep="|")

We name the final dataset “T20_Stats_Career.txt”.

Now we will use Python for the rest of our analysis.

import numpy as np

import torch

import torch.optim as optim

import torch.nn as nn

from torch.utils.data import TensorDataset,DataLoader

from sklearn.preprocessing import StandardScaler

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

import randomNow we have imported all vital libraries. We might now learn Participant’s knowledge.

df = pd.read_csv('T20_Stats_Career.txt',sep='|')

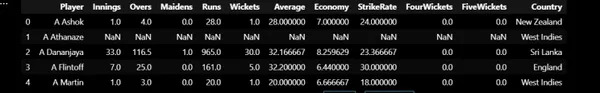

df.head()First 5 Rows of the dataset is given beneath:

For each participant, we’ve knowledge of Variety of Innings, Overs, Maidens, Runs, Wickets, Common, Financial system and Strike Price.

Feature Engineering

I have added two new features:

- Maiden Percentage: No of Maidens / No of Overs * 100

- Wickets Per Over: No of Wickets / No of Overs

df['Maiden_PCT'] = df['Maidens'] / df['Overs'] * 100

df['Wickets_Per_over'] = df['Wickets'] / df['Overs']

We also need to drop players with fewer than 15 innings so that we use only players with ample match experience for our analysis.
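The filtering step is described but not shown. A minimal sketch follows, assuming the innings count lives in a column named `Innings` (the actual column name in the file written from R may differ) and using a tiny made-up frame in place of the real data.

```python
import pandas as pd

# Tiny made-up frame standing in for the real dataset; the column name
# 'Innings' is an assumption about the file produced in R.
demo = pd.DataFrame({
    "Player": ["A", "B", "C", "D"],
    "Innings": [40, 12, 15, 3],
})

MIN_INNINGS = 15  # keep players with at least 15 innings

# Drop players with fewer than MIN_INNINGS innings.
demo_filtered = demo[demo["Innings"] >= MIN_INNINGS].reset_index(drop=True)
print(demo_filtered)
```

On the real data the same boolean filter would be applied to `df` before the train and test split, so that both datasets contain only experienced bowlers.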

Train and Test Dataset

Train Dataset: The train dataset has T20 statistics of players from nationalities other than India.

Test Dataset: Only Indian players.

# Create Train and Test Dataset

# .copy() gives independent frames so columns can be added later without pandas warnings

test = df[df['Country'] == 'India'].copy()

train = df[df['Country'] != 'India'].copy()

We use the following features to train our model:

- Average

- Economy

- Strike Rate

- No of 4 Wickets

- No of 5 Wickets

- Maiden Percentage

- Wickets Per Over

Drop Unnecessary Features

features = ['Average','Economy','StrikeRate','FourWickets','FiveWickets'

,'Maiden_PCT','Wickets_Per_over']

X_train = train[features]

X_test = test[features]

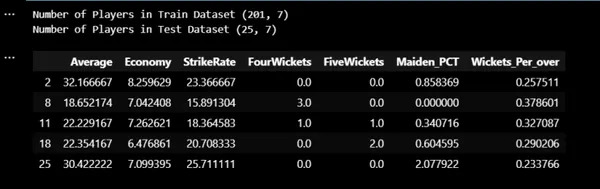

print("Number of Players in Train Dataset",X_train.shape)

print("Number of Players in Test Dataset",X_test.shape)

X_train.head()

Data Standardization

We have the train and test datasets. Now we need to standardize the data.

sc = StandardScaler()

sc.fit(X_train)

X_train = sc.transform(X_train)

X_test = sc.transform(X_test)

Model Training

We now set the appropriate device and set up data loaders with a batch size of 16.

# Create Tensor Dataset and Dataloaders

device = "cuda" if torch.cuda.is_available() else "cpu"

torch.manual_seed(13)

x_train_tensor = torch.as_tensor(X_train).float().to(device)

y_train_tensor = torch.as_tensor(X_train).float().to(device)

x_test_tensor = torch.as_tensor(X_test).float().to(device)

y_test_tensor = torch.as_tensor(X_test).float().to(device)

train_dataset = TensorDataset(x_train_tensor,y_train_tensor)

test_dataset = TensorDataset(x_test_tensor,y_test_tensor)

train_loader = DataLoader(dataset=train_dataset,batch_size=16,shuffle=True)

test_loader = DataLoader(dataset=test_dataset,batch_size=16)

We set the AutoEncoder architecture as below:

7 -> 4 -> 2 -> 4 -> 7.

As we are dealing with very little data, we will build a simple model.

We use a learning rate of 0.001 and Adam as the optimizer.

# AutoEncoder Architecture

class AutoEncoder(nn.Module):

    def __init__(self):

        super(AutoEncoder,self).__init__()

        # Encoder: 7 -> 4 -> 2

        self.encoder = nn.Sequential()

        self.encoder.add_module('Hidden1',nn.Linear(7,4))

        self.encoder.add_module('Relu1',nn.ReLU())

        self.encoder.add_module('Hidden2',nn.Linear(4,2))

        # Decoder: 2 -> 4 -> 7

        self.decoder = nn.Sequential()

        self.decoder.add_module('Hidden3',nn.Linear(2,4))

        self.decoder.add_module('Relu2',nn.ReLU())

        self.decoder.add_module('Hidden4',nn.Linear(4,7))

    def forward(self,x):

        encoder = self.encoder(x)

        return self.decoder(encoder)

# Predict Method

def predict(model,x):

    model.eval()

    x_tensor = torch.as_tensor(x).float()

    y_hat = model(x_tensor.to(device))

    model.train()

    return y_hat.detach().cpu().numpy()

# Plot Losses

def plot_losses(train_losses,test_losses):

    fig = plt.figure(figsize=(10,4))

    plt.plot(train_losses,label="training_loss",c="b")

    # Plot the test loss only when it was recorded

    if test_losses:

        plt.plot(test_losses,label="test loss",c="r")

    #plt.yscale('log')

    plt.xlabel('Epochs')

    plt.ylabel('Loss')

    plt.legend()

    plt.tight_layout()

    return fig

# Model Loss and Optimizer

lr = 0.001

torch.manual_seed(21)

model = AutoEncoder().to(device)

optimizer = optim.Adam(model.parameters(),lr = lr)

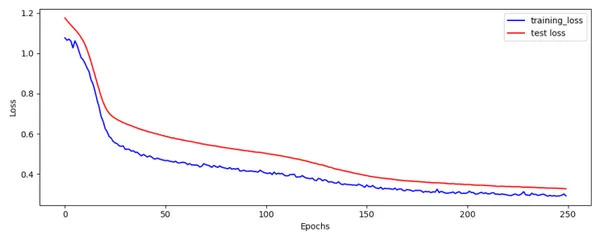

loss_fn = nn.MSELoss()

We train our model for 250 epochs.

num_epochs=250

train_loss=[]

test_loss=[]

seed=42

torch.backends.cudnn.deterministic=True

torch.backends.cudnn.benchmark=False

torch.manual_seed(seed)

np.random.seed(seed)

random.seed(seed)

for epoch in range(num_epochs):

    mini_batch_train_loss=[]

    mini_batch_test_loss=[]

    # Training pass over all mini-batches

    for train_batch,y_train in train_loader:

        train_batch = train_batch.to(device)

        model.train()

        yhat = model(train_batch)

        loss = loss_fn(yhat,y_train)

        mini_batch_train_loss.append(loss.cpu().detach().numpy())

        loss.backward()

        optimizer.step()

        optimizer.zero_grad()

    train_epoch_loss = np.mean(mini_batch_train_loss)

    train_loss.append(train_epoch_loss)

    # Evaluation pass on the test set, without gradient tracking

    with torch.no_grad():

        for test_batch,y_test in test_loader:

            test_batch = test_batch.to(device)

            model.eval()

            yhat = model(test_batch)

            loss = loss_fn(yhat,y_test)

            mini_batch_test_loss.append(loss.cpu().detach().numpy())

    test_epoch_loss = np.mean(mini_batch_test_loss)

    test_loss.append(test_epoch_loss)

fig = plot_losses(train_loss,test_loss)

fig.savefig('Train_Test_Loss.png')

The train and test loss plot looks OK.

Mean Squared Error (MSE)

Using the predict function we can predict for the train dataset. We then compute the Mean Squared Error by squaring the difference between actual and predicted values and averaging across the features. We will also compute a Z-score using the mean and standard deviation of the MSE.

# Predict Train Dataset and get error

train_pred = predict(model,X_train)

print(train_pred.shape)

error = np.mean(np.power(X_train - train_pred,2),axis=1)

print(error.shape)

train['error'] = error

mean_error = np.mean(train['error'])

std_error = np.std(train['error'])

train['zscore'] = (train['error'] - mean_error) / std_error

train = train.sort_values(by='error').reset_index()

train.to_csv('Train_Output.txt',sep="|",index=False)

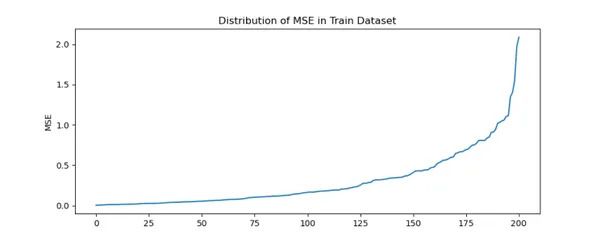

fig = plt.figure(figsize=(10,4))

plt.title('Distribution of MSE in Train Dataset')

train['error'].plot(kind='line')

plt.ylabel('MSE')

plt.show()

fig.savefig('Train_MSE.png')

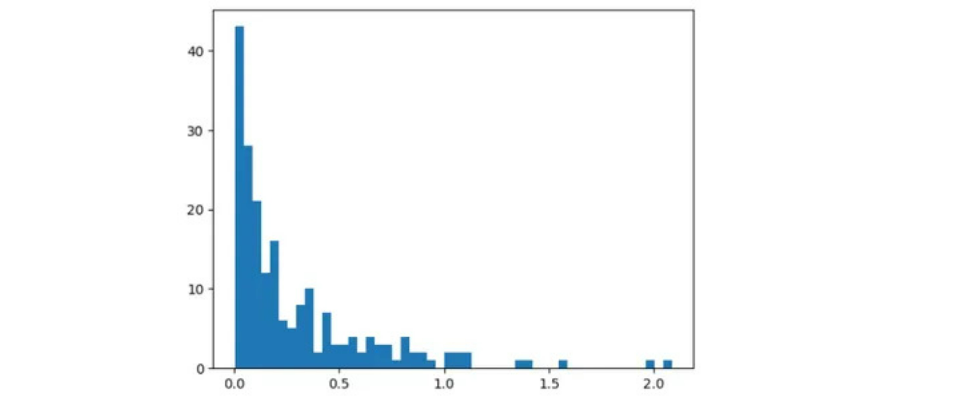

We can infer that there is a steep increase in MSE for certain players.

The majority of players have an MSE within 1. Beyond an MSE of 1.2 there are only a few players.

The top 3 players in the train dataset with the highest MSE are:

train.tail(3)

Please keep in mind that we are using the tail function.

We can infer that for some players the AutoEncoder struggles to reconstruct the original values, resulting in a high MSE.

By looking at the above plots, we can set the threshold to 1.2.

I agree that we should split the data into train and validation sets and use the validation set to set the threshold. But in this case we have only 200 rows, so we are forced to take this approach.
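With more data, the threshold could be chosen on held-out rows rather than by eye. The sketch below is not what this article does: it uses synthetic reconstruction errors, a 20% hold-out and a 95th-percentile cutoff, all of which are assumptions chosen purely for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic per-row reconstruction errors standing in for real model output.
# In practice these would come from a model trained on the non-held-out rows.
errors = rng.gamma(shape=2.0, scale=0.2, size=1000)

# Hold out 20% of the rows as a validation set.
idx = rng.permutation(len(errors))
n_val = len(errors) // 5
val_errors = errors[idx[:n_val]]

# Use a high percentile of the validation errors as the anomaly threshold.
threshold = np.percentile(val_errors, 95)

# Flag any row whose error exceeds the threshold.
is_anomaly = errors > threshold
print(round(float(threshold), 3), int(is_anomaly.sum()))
```

The percentile is a tunable choice: a higher value flags fewer players, a lower value flags more.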

Test Dataset – Indian Players or Bowlers

Let us now compute the Mean Squared Error and Z-score for the test data.

# Predict Test Dataset and get error

test_pred = predict(model,X_test)

test_error = np.mean(np.power(X_test - test_pred,2),axis=1)

test['error'] = test_error

test['zscore'] = (test['error'] - mean_error) / std_error

test = test.sort_values(by='error').reset_index()

test.to_csv('Test_Output.txt',sep="|",index=False)

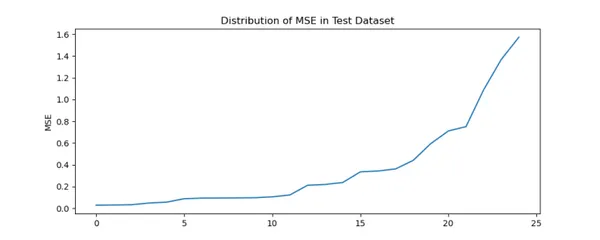

fig = plt.figure(figsize=(10,4))

plt.title('Distribution of MSE in Test Dataset')

test['error'].plot(kind='line')

plt.ylabel('MSE')

plt.show()

fig.savefig('Test_MSE.png')

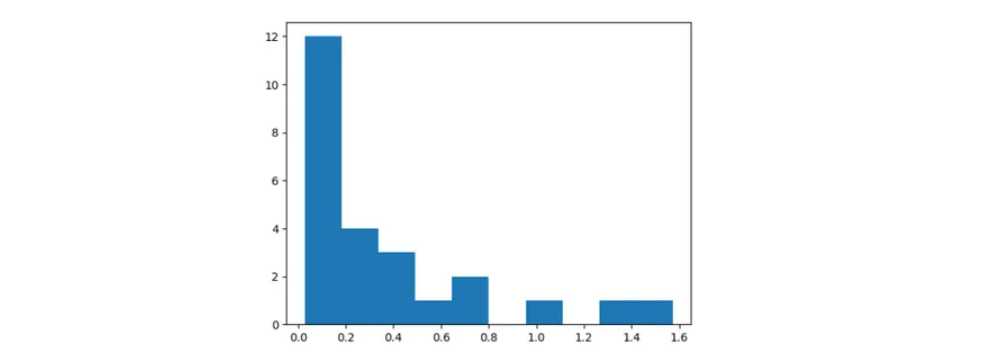

Similar to the train dataset, there is a steep increase in MSE for certain Indian players.

test.tail(3)

Please keep in mind that we are using the tail function. Hence the correct order is Kuldeep Yadav, JJ Bumrah and Harbhajan Singh.

As in the train dataset, we create a new column named error in the test dataset which holds the MSE values. Similar to the train dataset, the AutoEncoder struggles to reconstruct the original values for some Indian players.

Using the train MSE we have computed the mean and standard deviation. For each value in the test dataset we compute the Z-score as (test error - train mean error) / train error standard deviation.

We can verify that the Z-score for Bumrah is more than 3, which indicates an anomaly, or genius.
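The Z-score calculation can be sketched with made-up numbers. The train errors and the single test error below are assumptions for illustration, not values from the article's data.

```python
import numpy as np

# Made-up train errors and one test error, for illustration only.
train_errors = np.array([0.2, 0.4, 0.6, 0.8, 1.0])
test_error = 1.5

# Mean and (population) standard deviation come from the train errors only.
mean_error = train_errors.mean()
std_error = train_errors.std()

# The test error is scored against the train distribution.
z = (test_error - mean_error) / std_error
print(round(float(z), 2))
```

Because the mean and standard deviation come from the train errors, a test Z-score above 3 means the player is reconstructed far worse than a typical player in the train dataset.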

MSE Breakdown or Drill Down

Allow us to now study in regards to the MSE breakdown for the gamers.

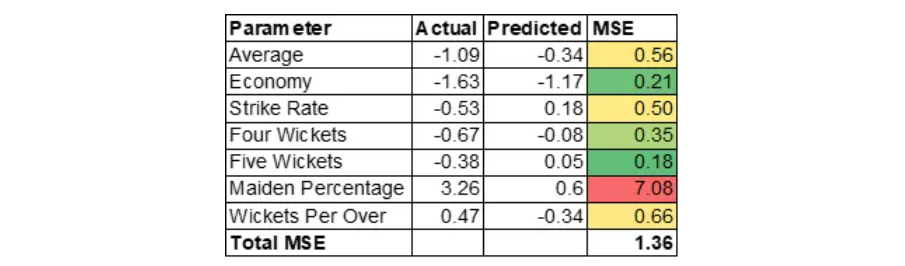

Jasprit Bumrah

Allow us to now, perceive why MSE is excessive for Jasprit Bumrah. MSE of Jasprit Bumrah is 1.36. Allow us to drill down additional on the MSE at variable stage to grasp contributing components.

MSE is calculated as (Precise – Predicted) * (Precise – Predicted).

Please notice that we’re coping with standardized values. Motive for the excessive MSE is generally contributed by excessive Maiden Proportion. This implies Bumrah could be an impressive bowler at nineteenth or twentieth over of the innings. Excessive Maiden Proportion would create stress on batsman which may end up in different bowlers taking wickets within the subsequent over. Please notice that variable Maiden Proportion is was created by Function Engineering.
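The drill-down can be sketched as follows. The feature names match the list used for training, but the standardized values and the reconstruction are made up for illustration and are not Bumrah's actual numbers.

```python
import numpy as np

feature_names = ["Average", "Economy", "StrikeRate", "FourWickets",
                 "FiveWickets", "Maiden_PCT", "Wickets_Per_over"]

# Made-up standardized values for one player and a hypothetical reconstruction.
actual = np.array([-1.0, -1.5, -0.8, 0.2, 0.0, 3.0, 0.5])
predicted = np.array([-0.8, -1.2, -0.6, 0.1, 0.1, 0.5, 0.4])

# Squared error per feature; their mean is the player's MSE.
sq_err = (actual - predicted) ** 2
mse = sq_err.mean()

# Share of the total squared error contributed by each feature.
share = sq_err / sq_err.sum()
top_feature = feature_names[int(np.argmax(share))]
print(top_feature, round(float(mse), 3))
```

Sorting `share` in descending order gives a simple per-player explanation of which variables the AutoEncoder failed to reconstruct.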

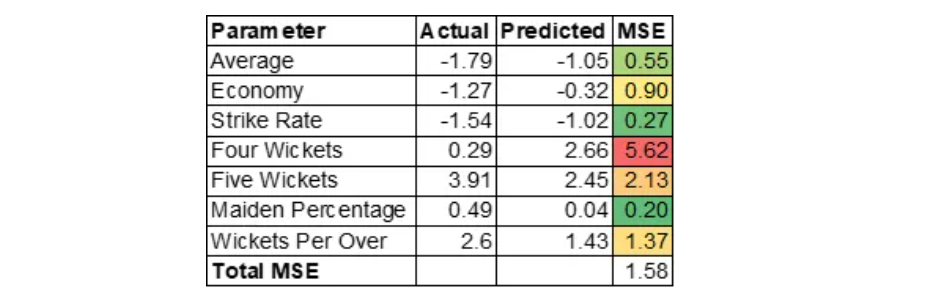

Kuldeep Yadav

Kuldeep Yadav has uncanny potential of selecting up wickets which will likely be helpful in center overs. Auto Encoder over predicted 4 wickets variable.

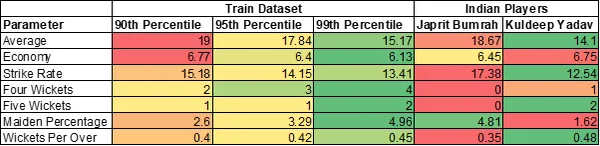

General Statistics of Prime 2 Indian Bowlers

Jasprit Bumrah has 2 variables in additional than ninetieth percentile. Kuldeep Yadav has 4 variables in additional than 99th percentile.
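One way such percentiles could be computed is with a percentile rank per column. This is a sketch under assumptions: the article does not say how its percentiles were derived, and the values below are made up.

```python
import pandas as pd

# Made-up values for one feature across ten players (illustrative only).
demo = pd.DataFrame({
    "Player": list("ABCDEFGHIJ"),
    "Maiden_PCT": [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 9.0],
})

# Percentile rank of each player within the column, on a 0-1 scale.
demo["Maiden_PCT_pctile"] = demo["Maiden_PCT"].rank(pct=True)

# Players strictly above the 90th percentile for this feature.
above_90 = demo.loc[demo["Maiden_PCT_pctile"] > 0.90, "Player"].tolist()
print(above_90)
```

Repeating this for every feature and counting, per player, how many columns exceed the cutoff would give a table like the one summarized above.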

Hope to see Kuldeep Yadav in action soon.

You can find the full code here.

Conclusion

AutoEncoder is a powerful tool in one's arsenal for anomaly detection, but it is not the only method. We can also consider using ML algorithms like Isolation Forest or other simpler methods. Coming back to our problem, we can infer that the AutoEncoder is able to correctly identify anomalies. The hardest part of anomaly detection is convincing stakeholders of the reasons for the anomaly. Here we computed a drill-down of the MSE to identify the reasons for the anomaly. These insights are as important as detecting the anomaly itself. Explainable AI is important.
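For comparison, here is a minimal sketch of the Isolation Forest alternative using scikit-learn. It was not part of this analysis: the data is synthetic, and `contamination=0.01` is an assumed share of anomalies rather than a tuned value.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)

# Synthetic standardized features: 200 ordinary rows plus one extreme row.
X = rng.normal(size=(200, 7))
outlier = np.full((1, 7), 6.0)
X_all = np.vstack([X, outlier])

# Fit an Isolation Forest; contamination is an assumed share of anomalies.
iso = IsolationForest(n_estimators=100, contamination=0.01, random_state=0)
labels = iso.fit_predict(X_all)      # -1 = anomaly, 1 = normal
scores = iso.score_samples(X_all)    # lower = more anomalous

print(labels[-1], int(np.argmin(scores)))
```

Unlike the AutoEncoder, this gives a single anomaly score per row with no per-variable reconstruction error, so the drill-down used in this article would need a different explanation technique.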

Key Takeaways

- We used the R package cricketdata to download T20 player statistics for Test playing nations and saved the data to disk.

- We did feature engineering by computing Maiden Percentage and Wickets Per Over.

- Using PyTorch we trained an AutoEncoder model for 250 epochs on the train dataset. We used Adam as the optimizer and set the learning rate to 0.001.

- We computed the Mean Squared Error from the difference between actual and predicted values in both the train and test datasets.

- We consider reconstruction error beyond a certain threshold as an anomaly. In our case it is 1.2.

- By looking at the breakdown of MSE we can infer that Bumrah excels in bowling maidens, which is gold in T20.

Frequently Asked Questions

A. Yes, we can use ML methods like Isolation Forest or other simpler methods to solve this problem. I have used AutoEncoder just for illustration.

A. Yes, the output is different when trained using different seeds, as the data is small. The main motive of this blog is to demonstrate the application of AutoEncoder and how it can be used for generating insights to assist decision making.

A. Training time is less than a minute.

A. The deep learning framework should not matter. It all depends on the framework one is comfortable with.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author's discretion.