This article looks at what RAG promises and how it holds up in practice. We’ll explore how RAG works and its potential benefits, then share firsthand accounts of the challenges we’ve encountered, the solutions we’ve developed, and the unresolved questions we continue to investigate. Along the way, you’ll gain a comprehensive understanding of RAG’s capabilities and its evolving role in advancing AI.

Imagine you’re chatting with someone who’s not only out of touch with current events but also prone to confidently making things up when they’re unsure. This scenario mirrors the challenges with traditional generative AI: while knowledgeable, it relies on outdated data and often “hallucinates” details, leading to errors delivered with unwarranted certainty.

Retrieval-augmented generation (RAG) transforms this scenario. It’s like giving that person a smartphone with access to the latest information from the Internet. RAG equips AI systems to fetch and integrate up-to-date data, enhancing the accuracy and relevance of their responses. However, this technology isn’t a one-stop solution; it navigates uncharted waters with no uniform strategy for all scenarios. Effective implementation varies by use case and often requires trial and error.

What Is RAG and How Does It Work?

Retrieval-augmented generation (RAG) is an AI technique that promises to significantly enhance the capabilities of generative models by incorporating external, up-to-date information during the response generation process. This method equips AI systems to produce responses that are not only accurate but also highly relevant to current contexts, by enabling them to access the latest data available.

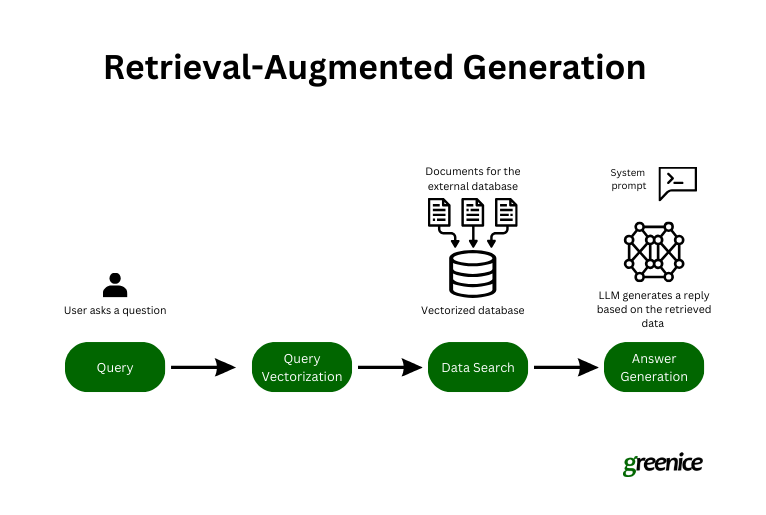

Here’s a detailed look at each step involved:

- Initiating the query. The process begins when a user poses a question to an AI chatbot. This is the initial interaction, where the user brings a specific topic or query to the AI.

- Encoding for retrieval. The query is then transformed into text embeddings. These embeddings are numerical representations of the query that capture the essence of the question in a format that the model can analyze computationally.

- Finding relevant data. The retrieval component of RAG takes over, using the query embeddings to perform a semantic search across a dataset. This search is not about matching keywords but about understanding the intent behind the query and finding data that aligns with this intent.

- Generating the answer. With the relevant external data integrated, the RAG generator crafts a response that combines the AI’s trained knowledge with the newly retrieved, specific information. This results in a response that’s not only informed but also contextually relevant.
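The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production pipeline: `embed` is a toy stand-in that counts words, so it only matches shared vocabulary, whereas a real system would call a learned embedding model that also matches paraphrases. The function names and sample passages are hypothetical, and the final call to a language model is left out.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model (assumption): lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    # Rank every passage by similarity to the query and keep the top k.
    q = embed(query)
    return sorted(passages, key=lambda p: cosine(q, embed(p)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    # Combine the retrieved passages with the user's question for the generator.
    joined = "\n".join(f"- {c}" for c in context)
    return f"Use the context to answer.\n\nContext:\n{joined}\n\nQuestion: {query}"


# Hypothetical sample data.
passages = [
    "Replace the oil filter every 250 hours of operation.",
    "The warranty covers parts for two years.",
    "Check coolant level before each start.",
]
query = "How often should I replace the oil filter?"
top = retrieve(query, passages, k=1)
prompt = build_prompt(query, top)
```

The resulting `prompt` is what would be sent to the generative model in the last step.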

RAG Development Process

Developing a retrieval-augmented generation system for generative AI involves several key steps to ensure it not only retrieves relevant information but also integrates it effectively to enhance responses. Here’s a streamlined overview of the process:

- Collecting custom data. The first step is gathering the external data your AI will access. This involves compiling a diverse and relevant dataset that corresponds to the topics the AI will address. Sources might include textbooks, equipment manuals, statistical data, and project documentation to form the factual basis for the AI’s responses.

- Chunking and formatting data. Once collected, the data needs preparation. Chunking breaks down large datasets into smaller, more manageable segments for easier processing.

- Converting data to embeddings (vectors). This involves converting the data chunks into embeddings, also called vectors: dense numerical representations that help the AI analyze and compare data efficiently.

- Developing the data search. The system uses advanced search algorithms, including semantic search, to go beyond mere keyword matching. It uses natural-language processing (NLP) to grasp the intent behind queries and retrieve the most relevant data, even when the user’s terminology isn’t precise.

- Preparing system prompts. The final step involves crafting prompts that guide how the large language model (LLM) uses the retrieved data to formulate responses. These prompts help ensure that the AI’s output is not only informative but also contextually aligned with the user’s query.

These steps outline the ideal process for RAG development. However, practical implementation often requires additional adjustments and optimizations to meet specific project goals, as challenges can arise at any stage of the process.
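Two of these steps, chunking and preparing the system prompt, are easy to illustrate. The sketch below is one possible approach rather than a recommended configuration: it splits text into fixed-size word windows with some overlap, and assembles a chat-style message list. The chunk size, overlap, prompt wording, and the `role`/`content` message layout are all assumptions chosen for illustration.

```python
def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    # Split text into windows of `size` words; consecutive windows share `overlap` words.
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


# Hypothetical system prompt; real wording depends on the project.
SYSTEM_PROMPT = (
    "Answer using only the context below. "
    "If the context does not contain the answer, say you don't know."
)


def build_messages(question: str, chunks: list[str]) -> list[dict]:
    # Number the retrieved chunks and place them in the system message.
    context = "\n\n".join(f"[{i}] {c}" for i, c in enumerate(chunks, start=1))
    return [
        {"role": "system", "content": f"{SYSTEM_PROMPT}\n\nContext:\n{context}"},
        {"role": "user", "content": question},
    ]


sample = " ".join(f"w{i}" for i in range(12))
pieces = chunk_words(sample, size=5, overlap=2)
messages = build_messages("What is w9?", pieces[-1:])
```

Overlap is a common way to avoid cutting a sentence's context at a chunk boundary; whether it helps, and by how much, depends on the documents.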

The Promises of RAG

RAG’s promises are twofold. On the one hand, it aims to simplify how users find answers, enhancing their experience by providing more accurate and relevant responses. This improves the overall process, making it easier and more intuitive for users to get the information they need. On the other hand, RAG enables businesses to fully exploit their data by making vast stores of information readily searchable, which can lead to better decision-making and insights.

Accuracy boost

Accuracy remains a critical limitation in large language models (LLMs), which can manifest in several ways:

- False information. When unsure, LLMs might present plausible but incorrect information.

- Outdated or generic responses. Users seeking specific and current information often receive broad or outdated answers.

- Non-authoritative sources. LLMs sometimes generate responses based on unreliable sources.

- Terminology confusion. Different sources may use similar terminology in different contexts, leading to inaccurate or confused responses.

With RAG, you can tailor the model to draw from the right data, so that responses are more relevant and accurate for the tasks at hand.

Conversational search

RAG is set to enhance how we search for information, aiming to outperform traditional search engines like Google by allowing users to find necessary information through a human-like conversation rather than a series of disconnected search queries. This promises a smoother and more natural interaction, where the AI understands and responds to queries within the flow of a normal dialogue.

Reality check

However appealing the promises of RAG may seem, it’s important to remember that this technology is not a cure-all. While RAG can offer undeniable benefits, it’s not the answer to all challenges. We’ve implemented the technology in several projects, and we’ll share our experiences, including the obstacles we’ve faced and the solutions we’ve found. This real-world insight aims to provide a balanced view of what RAG can truly offer and what remains a work in progress.

Real-world RAG Challenges

Implementing retrieval-augmented generation in real-world scenarios brings a unique set of challenges that can deeply impact AI performance. Although this method boosts the chances of accurate answers, perfect accuracy isn’t guaranteed.

Our experience with a power generator maintenance project showed significant hurdles in ensuring the AI used retrieved data correctly. Often, it would misinterpret or misapply information, resulting in misleading answers.

Additionally, handling conversational nuances, navigating extensive databases, and correcting AI “hallucinations” when it invents information complicate RAG deployment further.

These challenges highlight that RAG must be custom-fitted for each project, underscoring the continuous need for innovation and adaptation in AI development.

Accuracy is not guaranteed

While RAG significantly improves the odds of delivering the correct answer, it’s important to acknowledge that it doesn’t guarantee 100% accuracy.

In our practical applications, we’ve found that it’s not enough for the model to simply access the right information from the external data sources we’ve provided; it must also effectively utilize that information. Even when the model does use the retrieved data, there’s still a risk that it might misinterpret or distort this information, making it less useful or even inaccurate.

For example, when we developed an AI assistant for power generator maintenance, we struggled to get the model to find and use the right information. The AI would occasionally “spoil” the valuable data, either by misapplying it or altering it in ways that detracted from its utility.

This experience highlighted the complex nature of RAG implementation, where merely retrieving information is just the first step. The real task is integrating that information effectively and accurately into the AI’s responses.

Nuances of conversational search

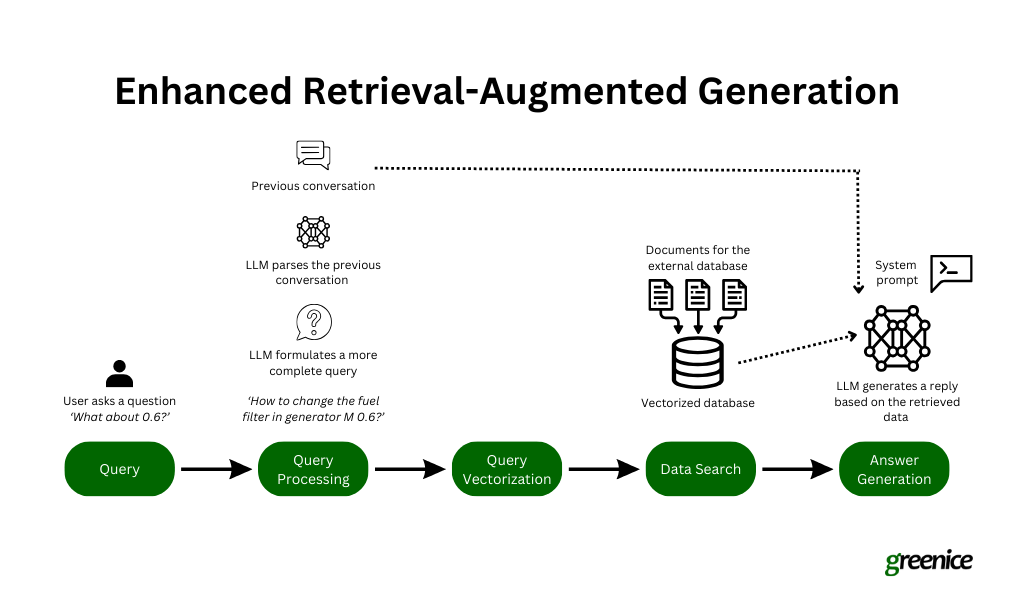

There’s a big difference between searching for information using a search engine and chatting with a chatbot. When using a search engine, you usually make sure your question is well-defined to get the best results. But in a conversation with a chatbot, questions can be less formal and incomplete, like saying, “And what about X?” For example, in our project developing an AI assistant for power generator maintenance, a user might start by asking about one generator model and then suddenly switch to another one.

Handling these quick changes and abrupt questions requires the chatbot to understand the full context of the conversation, which is a major challenge. We found that RAG had a hard time finding the right information based on the ongoing conversation.

To improve this, we adapted our system to have the underlying LLM rephrase the user’s query using the context of the conversation before it tries to find information. This approach helped the chatbot to better understand and respond to incomplete questions and made the interactions more accurate and relevant, although it’s not perfect every time.
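A rough sketch of this rephrasing step might look like the following. It is not our production code: the instruction wording, the function names, and the generator model names are hypothetical, and the LLM is represented by any callable that takes a prompt string and returns text, replaced here by a stub so the example runs on its own.

```python
from typing import Callable

# Hypothetical instruction; real wording would be tuned per project.
REWRITE_INSTRUCTION = (
    "Rewrite the user's last message as a standalone search query. "
    "Resolve pronouns and omitted subjects using the conversation. "
    "Return only the rewritten query."
)


def build_rewrite_prompt(history: list[tuple[str, str]], question: str) -> str:
    # Serialize earlier turns so the model can see what "it" or "X" refers to.
    turns = "\n".join(f"{role}: {text}" for role, text in history)
    return (
        f"{REWRITE_INSTRUCTION}\n\nConversation:\n{turns}\n\n"
        f"Last message: {question}\n\nStandalone query:"
    )


def rewrite_query(
    history: list[tuple[str, str]], question: str, llm: Callable[[str], str]
) -> str:
    # With no prior turns there is nothing to resolve, so search with the question as-is.
    if not history:
        return question
    rewritten = llm(build_rewrite_prompt(history, question)).strip()
    # Fall back to the original question if the model returns nothing.
    return rewritten or question


def fake_llm(prompt: str) -> str:
    # Stub standing in for a real model call (assumption).
    return " What is the oil change interval for the G-350 generator? "


history = [
    ("user", "What is the oil change interval for the G-200?"),
    ("assistant", "Every 200 hours of operation."),
]
search_query = rewrite_query(history, "And what about the G-350?", fake_llm)
```

The rewritten query, rather than the raw follow-up, is then passed to retrieval.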

Database navigation

Navigating vast databases to retrieve the right information is a significant challenge in implementing RAG. Once we have a well-defined query and understand what information is needed, the next step isn’t just about searching; it’s about searching effectively. Our experience has shown that trying to comb through an entire external database is not practical. If your project includes hundreds of documents, each potentially spanning hundreds of pages, the volume becomes unmanageable.

To address this, we’ve developed a method to streamline the process by first narrowing our focus to the specific document likely to contain the needed information. We use metadata to make this possible, assigning clear, descriptive titles and detailed descriptions to each document in our database. This metadata acts like a guide, helping the model to quickly identify and select the most relevant document in response to a user’s query.

Once the right document is pinpointed, we then perform a vector search within that document to locate the most pertinent section or data. This targeted approach not only speeds up the retrieval process but also significantly enhances the accuracy of the information retrieved, so that the response generated by the AI is as relevant and precise as possible. This strategy of refining the search scope before delving into content retrieval is crucial for efficiently managing and navigating large databases in RAG systems.
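The two-stage idea can be illustrated with a small sketch. It makes several simplifying assumptions: document selection is done by scoring the query against each document’s title and description (a stand-in for however the model picks a document in a real system), `embed` is a toy word-count function rather than a learned embedding model, and the documents and model names are invented.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Document:
    title: str
    description: str
    chunks: list[str]


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model (assumption): lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def select_document(query: str, docs: list[Document]) -> Document:
    # Stage 1: compare the query with metadata only, not with document contents.
    q = embed(query)
    return max(docs, key=lambda d: cosine(q, embed(f"{d.title} {d.description}")))


def search_within(query: str, doc: Document, k: int = 1) -> list[str]:
    # Stage 2: rank only the chunks of the selected document.
    q = embed(query)
    return sorted(doc.chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


# Hypothetical documents.
docs = [
    Document(
        "G-200 maintenance manual",
        "Service intervals and procedures for the G-200 generator",
        ["Change the G-200 oil every 200 hours.", "Inspect the G-200 air filter monthly."],
    ),
    Document(
        "G-350 maintenance manual",
        "Service intervals and procedures for the G-350 generator",
        ["Change the G-350 oil every 350 hours.", "Inspect the G-350 air filter weekly."],
    ),
]
query = "How often do I change the oil on the G-350?"
chosen = select_document(query, docs)
hits = search_within(query, chosen, k=1)
```

Because stage 2 never looks at chunks from other documents, a passage about the wrong generator model cannot be returned once the right manual has been selected.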

Hallucinations

What happens if a user asks for information that isn’t available in the external database? Based on our experience, the LLM might invent responses. This issue, known as hallucination, is a significant challenge, and we’re still working on solutions.

For instance, in our power generator project, a user might inquire about a model that isn’t documented in our database. Ideally, the assistant should acknowledge the lack of information and state its inability to help. However, instead of doing this, the LLM sometimes pulls information about a similar model and presents it as if it were relevant. As of now, we’re exploring ways to address this issue so that the AI reliably indicates when it cannot provide accurate information based on the data available.
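One mitigation that is often tried, shown here only as an illustration and not as the solution we settled on, is to decline when no retrieved passage scores above a relevance threshold. The sketch assumes retrieval already returns `(passage, score)` pairs; the threshold value, the fallback message, and the function names are hypothetical.

```python
from typing import Callable

# Hypothetical fallback wording.
FALLBACK = "I don't have documentation covering that, so I can't answer reliably."


def filter_by_score(scored: list[tuple[str, float]], min_score: float = 0.5) -> list[str]:
    # Keep passages at or above the threshold, best first.
    ranked = sorted(scored, key=lambda pair: pair[1], reverse=True)
    return [passage for passage, score in ranked if score >= min_score]


def answer_or_decline(
    scored: list[tuple[str, float]],
    generate: Callable[[list[str]], str],
    min_score: float = 0.5,
) -> str:
    # Only call the generator when at least one passage looks relevant enough.
    passages = filter_by_score(scored, min_score)
    if not passages:
        return FALLBACK
    return generate(passages)


def fake_generate(passages: list[str]) -> str:
    # Stub standing in for the LLM call (assumption).
    return f"Based on {len(passages)} passage(s): {passages[0]}"


weak = answer_or_decline([("G-200 oil specification", 0.31)], fake_generate)
strong = answer_or_decline([("G-350 oil specification", 0.82)], fake_generate)
```

A threshold alone will not catch every case described above: a passage about a similar model can still score highly, so additional checks, such as confirming that the model named in the question appears in the retrieved text, may be needed.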

Finding the “right” approach

Another important lesson from our work with RAG is that there’s no one-size-fits-all solution for its implementation. For example, the successful strategies we developed for the AI assistant in our power generator maintenance project didn’t translate directly to a different context.

We tried to apply the same RAG setup to create an AI assistant for our sales team, aimed at streamlining onboarding and improving knowledge transfer. Like many other businesses, we struggle with a vast array of internal documentation that can be difficult to sift through. The goal was to deploy an AI assistant to make this wealth of information more accessible.

However, the nature of the sales documentation, geared more towards processes and protocols than technical specifications, differed significantly from the technical equipment manuals used in the previous project. This difference in content type and usage meant that the same RAG techniques didn’t perform as expected. The distinct characteristics of the sales documents required a different approach to how information was retrieved and presented by the AI.

This experience underscored the need to tailor RAG strategies specifically to the content, purpose, and user expectations of each new project, rather than relying on a universal template.

Key Takeaways and RAG’s Future

As we reflect on the journey through the challenges and intricacies of retrieval-augmented generation, several key lessons emerge that not only underscore the technology’s current capabilities but also hint at its evolving future.

- Adaptability is crucial. The varied success of RAG across different projects demonstrates the necessity for adaptability in its application. A one-size-fits-all approach doesn’t suffice, due to the diverse nature of data and requirements in each project.

- Continuous improvement. Implementing RAG requires ongoing adjustment and innovation. As we’ve seen, overcoming obstacles like hallucinations, improving conversational search, and refining data navigation are critical to harnessing RAG’s full potential.

- Importance of data management. Effective data management, particularly in organizing and preparing data, proves to be a cornerstone for successful implementation. This includes meticulous attention to how data is chunked, formatted, and made searchable.

Looking Ahead: The Future of RAG

- Enhanced contextual understanding. Future developments in RAG aim to better handle the nuances of conversation and context. Advances in NLP and machine learning could lead to more sophisticated models that understand and process user queries with greater precision.

- Broader implementation. As businesses recognize the benefits of making their data more accessible and actionable, RAG could see broader implementation across various industries, from healthcare to customer service and beyond.

- Innovative solutions to current challenges. Ongoing research and development are likely to yield innovative solutions to current limitations, such as the hallucination issue, thereby enhancing the reliability and trustworthiness of AI assistants.

In conclusion, while RAG presents a promising frontier in AI technology, it’s not without its challenges. The road ahead will require persistent innovation, tailored strategies, and an open-minded approach to fully realize the potential of RAG in making AI interactions more accurate, relevant, and helpful.