
The recent paper MIGC: Multi-Instance Generation Controller for Text-to-Image Synthesis, released on 24 February 2024, introduces a new task called Multi-Instance Generation (MIG). The goal is to generate multiple objects in one image, each with specific attributes and placed accurately. The proposed approach, the Multi-Instance Generation Controller (MIGC), breaks this task into smaller parts and uses an attention mechanism to render each object precisely. The rendered objects are then combined to form the final image. The authors also create a benchmark called COCO-MIG to evaluate models on this task, and their experiments show that the approach offers exceptional control over the quantity, position, attributes, and interactions of the generated objects.

This article provides a detailed overview of the MIGC paper and also includes a Paperspace demo for hands-on experimentation with MIGC.

Introduction

Stable Diffusion is well known for text-to-image generation and has shown remarkable capabilities across domains such as photography and painting. However, most of this research concentrates on Single-Instance Generation. Practical scenarios that require generating multiple instances simultaneously within a single image, with control over their quantity, position, attributes, and interactions, remain largely unexplored. This study dives deeper into the broader task of Multi-Instance Generation (MIG), aiming to handle the complexities of generating diverse instances within a unified framework.

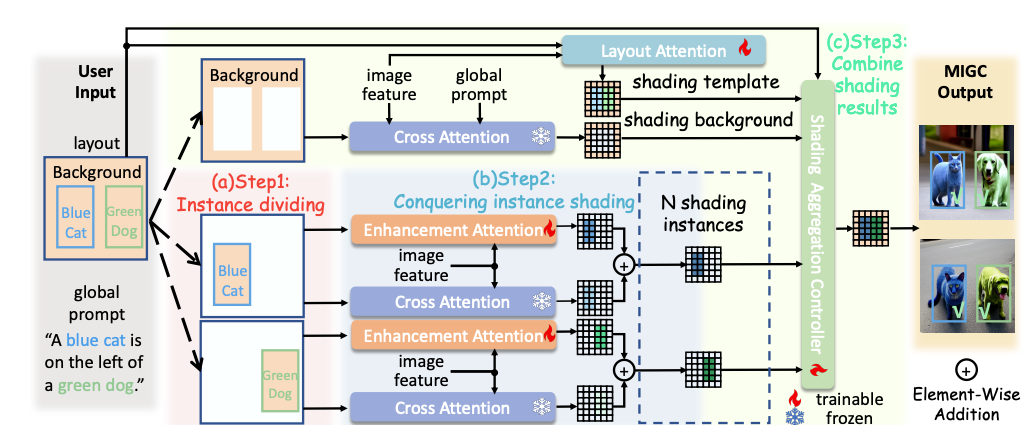

"Motivated by the divide-and-conquer strategy, we propose the Multi-Instance Generation Controller (MIGC) approach. This approach aims to decompose MIG into multiple subtasks and then combine the results of those subtasks." – Original Research Paper

MIGC consists of three steps:

1) Divide: MIGC breaks Multi-Instance Generation (MIG) down into individual instance shading subtasks within the Cross-Attention layers of SD. This approach accelerates the resolution of each subtask and enhances the harmony of the generated images.

2) Conquer: MIGC uses a special layer called the Enhancement Attention Layer to refine the shading results from the frozen Cross-Attention. This ensures that each instance receives accurate shading.

3) Combine: MIGC obtains a shading template using a Layout Attention layer. It then combines this template with the shaded background and the shaded instances and sends them to a Shading Aggregation Controller to obtain the final shading result.
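To make the divide-and-combine idea more concrete, here is a deliberately simplified NumPy sketch. It is not MIGC's implementation: it skips the Enhancement Attention and Layout Attention layers entirely and only shows how per-instance shading results, each restricted to its own bounding box, could be merged with a background shading through per-pixel softmax weights. The function names, the array shapes, the normalized `[x0, y0, x1, y1]` box format, and the default all-zero aggregation logits (which stand in for whatever the Shading Aggregation Controller would actually predict) are all our assumptions.

```python
import numpy as np


def box_to_mask(box, height, width):
    """Binary (H, W) mask for a normalized [x0, y0, x1, y1] box (assumed format)."""
    x0, y0, x1, y1 = box
    mask = np.zeros((height, width), dtype=bool)
    r0, r1 = int(round(y0 * height)), int(round(y1 * height))
    c0, c1 = int(round(x0 * width)), int(round(x1 * width))
    mask[r0:r1, c0:c1] = True
    return mask


def aggregate_shading(background, instances, boxes, logits=None):
    """Toy aggregation of per-instance shading results (illustration only).

    background: (H, W, C) shading for the whole image
    instances:  list of (H, W, C) shading results, one per instance ("divide")
    boxes:      list of normalized [x0, y0, x1, y1] boxes, one per instance
    logits:     optional (N + 1, H, W) scores; a stand-in for the controller's
                output, defaulting to zeros (equal weights where boxes overlap)
    """
    height, width, _ = background.shape
    stack = np.stack([background, *instances])  # (N + 1, H, W, C)
    if logits is None:
        logits = np.zeros(stack.shape[:3])
    logits = logits.astype(float).copy()
    # Each instance may only contribute inside its own box
    for i, box in enumerate(boxes, start=1):
        logits[i][~box_to_mask(box, height, width)] = -np.inf
    # Per-pixel softmax over background + instances ("combine");
    # the background logit is always finite, so the max is finite too
    logits -= logits.max(axis=0, keepdims=True)
    weights = np.exp(logits)
    weights /= weights.sum(axis=0, keepdims=True)
    return (weights[..., None] * stack).sum(axis=0)


# Usage: one instance occupying the top-left quarter of a 4x4 "feature map"
bg = np.zeros((4, 4, 3))
inst = np.ones((4, 4, 3))
out = aggregate_shading(bg, [inst], [[0.0, 0.0, 0.5, 0.5]])
print(out[..., 0])
```

With zero logits, pixels inside the box average the background and the instance, while pixels outside it keep the background unchanged; a learned controller would instead decide these weights per pixel.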

Comprehensive experiments were conducted on COCO-MIG, COCO, and DrawBench. On COCO-MIG, MIGC substantially improved the Instance Success Rate from 32.39% to 58.43%. On the COCO benchmark, it raised the Average Precision (AP) from 40.68/68.26/42.85 to 54.69/84.17/61.71. Similarly, on DrawBench it improved position, attribute, and count accuracy, most notably boosting the attribute success rate from 48.20% to 97.50%. Moreover, as stated in the research paper, MIGC maintains an inference speed similar to that of the original Stable Diffusion.
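The gains are easier to compare when expressed as absolute and relative differences. The short script below only restates the numbers quoted above; reading the three COCO values as AP, AP50, and AP75 is our assumption about the format, not something stated in this article.

```python
# Before/after numbers as quoted in the paragraph above.
# Labelling the three COCO values as AP / AP50 / AP75 is an assumption.
results = {
    "COCO-MIG Instance Success Rate (%)": (32.39, 58.43),
    "COCO AP": (40.68, 54.69),
    "COCO AP50 (assumed)": (68.26, 84.17),
    "COCO AP75 (assumed)": (42.85, 61.71),
    "DrawBench attribute success rate (%)": (48.20, 97.50),
}


def gains(before, after):
    """Return (absolute gain in points, relative gain in percent), rounded."""
    absolute = after - before
    relative = 100.0 * absolute / before
    return round(absolute, 2), round(relative, 1)


for name, (before, after) in results.items():
    absolute, relative = gains(before, after)
    print(f"{name}: {before} -> {after} (+{absolute} points, +{relative}% relative)")
```

For example, the Instance Success Rate rises by 26.04 points, which is roughly an 80% relative improvement over the baseline.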

Analysis and Results

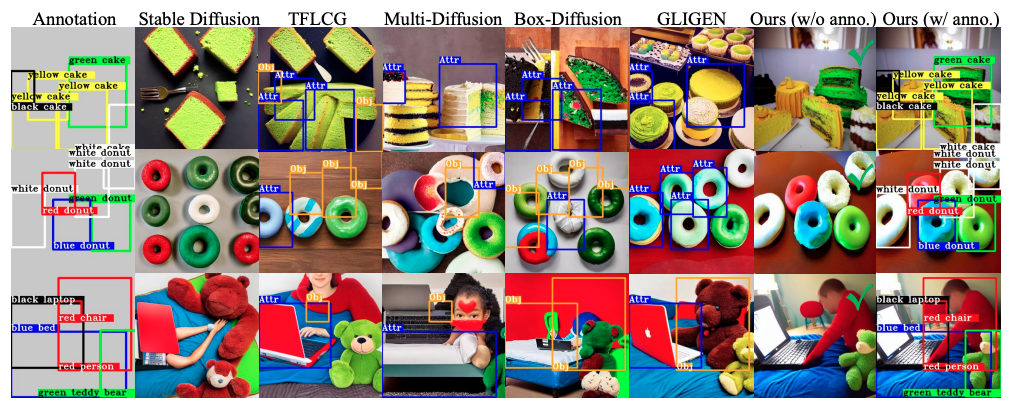

In Multi-Instance Generation (MIG), users provide the generation model with a global prompt (P), a bounding box for each instance (B), and a description for each instance (D). Based on these inputs, the model must create an image (I) in which each instance lies within its box and matches its description, and all instances fit together coherently across the image. Prior methods such as Stable Diffusion struggle with attribute leakage, which includes textual leakage and spatial leakage.
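One way to picture the (P, B, D) input is as a small data structure. The sketch below is hypothetical: `MIGInput` and `to_pipeline_inputs` are names we made up, and the normalized `[x0, y0, x1, y1]` box convention is an assumption. It packs the three inputs into the nested-list layout that the demo later in this article passes to the MIGC pipeline (global prompt first, then one description per instance, plus a parallel list of boxes).

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class MIGInput:
    """Hypothetical container for one Multi-Instance Generation request."""
    global_prompt: str              # P
    boxes: List[Sequence[float]]    # B: normalized [x0, y0, x1, y1] (assumed)
    descriptions: List[str]         # D: one description per box

    def validate(self) -> None:
        if len(self.boxes) != len(self.descriptions):
            raise ValueError("need exactly one description per bounding box")
        for box in self.boxes:
            if len(box) != 4:
                raise ValueError(f"box must have 4 values: {box}")
            x0, y0, x1, y1 = box
            if not (0.0 <= x0 < x1 <= 1.0 and 0.0 <= y0 < y1 <= 1.0):
                raise ValueError(f"box must be normalized with x0 < x1, y0 < y1: {box}")

    def to_pipeline_inputs(self):
        """Pack (P, B, D) as [[P, d1, d2, ...]] and [[b1, b2, ...]]."""
        self.validate()
        prompts = [[self.global_prompt, *self.descriptions]]
        bboxes = [[list(box) for box in self.boxes]]
        return prompts, bboxes


request = MIGInput(
    global_prompt="a red ball and a blue cube",
    boxes=[[0.1, 0.5, 0.4, 0.9], [0.6, 0.5, 0.9, 0.9]],
    descriptions=["red ball", "blue cube"],
)
prompts, bboxes = request.to_pipeline_inputs()
print(prompts)
print(bboxes)
```

Validating up front catches the most common mistakes (a missing description, or a box given in pixels instead of normalized coordinates) before any expensive generation runs.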

The image above compares MIGC with other baseline methods on the COCO-MIG dataset. A yellow bounding box labeled “Obj” marks instances whose position is generated inaccurately, and a blue bounding box labeled “Attr” marks instances whose attributes are generated incorrectly.

The experimental findings demonstrate that MIGC excels at attribute control, particularly regarding color, while maintaining precise control over the placement of instances.

To assess positions, Grounding-DINO was used to compare detected boxes with the ground truth, marking instances with an IoU above 0.5 as “Position Correctly Generated.” For attributes, if an instance was correctly positioned, Grounded-SAM was used to measure the percentage of the target color in HSV space, labeling instances with a percentage above 0.2 as “Fully Correctly Generated.”
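The two-stage check can be sketched in plain Python. This is only an illustration of the thresholds described above: the detected box and the instance's pixels are passed in directly (standing in for Grounding-DINO and Grounded-SAM outputs), and the hue range plus the saturation/value cut-offs in `color_fraction` are illustrative assumptions, not values from the paper. Note that a hue range such as red's, which wraps around 0/360 degrees, would need two intervals in this simple version.

```python
import colorsys


def iou(box_a, box_b):
    """Intersection over union of two [x0, y0, x1, y1] boxes."""
    ix0, iy0 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix1, iy1 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def color_fraction(pixels, hue_range, min_sat=0.3, min_val=0.3):
    """Share of RGB pixels (0-255) whose HSV hue lies in hue_range (degrees).

    The saturation/value thresholds are assumptions for illustration."""
    if not pixels:
        return 0.0
    lo, hi = hue_range
    hits = 0
    for r, g, b in pixels:
        h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        if s >= min_sat and v >= min_val and lo <= h * 360 < hi:
            hits += 1
    return hits / len(pixels)


def classify_instance(detected_box, target_box, instance_pixels, hue_range):
    """Apply the thresholds described above: IoU > 0.5, then color share > 0.2."""
    if iou(detected_box, target_box) <= 0.5:
        return "Position Incorrect"
    if color_fraction(instance_pixels, hue_range) <= 0.2:
        return "Position Correctly Generated"
    return "Fully Correctly Generated"


# Usage: a well-placed instance whose pixels are mostly pure blue (hue 240)
blue_pixels = [(0, 0, 255)] * 3 + [(255, 255, 255)]
print(classify_instance([0, 0, 1, 1], [0, 0, 1, 1], blue_pixels, (200, 260)))
```

Checking position first mirrors the description above: the color test is only applied to instances that are already correctly placed.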

On COCO-MIG, the main focus was on Instance Success Rate and mIoU, with IoU set to 0 if the color is incorrect. On COCO-Position, the metrics were Success Rate, mIoU, and Grounding-DINO AP for spatial accuracy, FID for image quality, and CLIP score and Local CLIP score for image-text consistency.
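Here is a minimal sketch of how the two COCO-MIG numbers could be aggregated from per-instance results. It assumes each instance has already been reduced to an `(iou, color_correct)` pair; zeroing the IoU for a wrong color follows the rule above, while counting an instance as a success when its adjusted IoU exceeds 0.5 is our assumption about how the Instance Success Rate is computed.

```python
def coco_mig_scores(instances):
    """Return (instance success rate, mIoU) for a list of (iou, color_correct) pairs.

    IoU is set to 0 when the color is wrong, as described above; the 0.5 success
    threshold on the adjusted IoU is an assumption."""
    if not instances:
        raise ValueError("no instances to score")
    adjusted = [iou if color_ok else 0.0 for iou, color_ok in instances]
    success_rate = sum(1 for v in adjusted if v > 0.5) / len(adjusted)
    miou = sum(adjusted) / len(adjusted)
    return success_rate, miou


# Usage: the second instance is well placed but has the wrong color,
# so it contributes an IoU of 0 and does not count as a success
scores = coco_mig_scores([(0.8, True), (0.7, False), (0.4, True), (0.9, True)])
print(scores)
```

Zeroing the IoU on a color mismatch means mIoU penalizes attribute leakage as heavily as a completely misplaced object.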

On DrawBench, Success Rate was assessed for position and count accuracy, along with checks for correct color attribution. Manual evaluations complement the automated metrics.
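As an illustration of the count part of this check, the sketch below compares requested object counts with detector outputs. Everything here is hypothetical: the detections are assumed to arrive as `(label, score)` pairs from some open-vocabulary detector, and the 0.35 confidence threshold is an arbitrary placeholder rather than the paper's setting.

```python
from collections import Counter


def count_success(requested, detections, score_threshold=0.35):
    """True if every requested label is detected exactly the requested number of times.

    requested:  {label: count}, e.g. {"cat": 2}
    detections: list of (label, score) pairs; score_threshold is an assumption."""
    found = Counter(label for label, score in detections if score >= score_threshold)
    return all(found[label] == n for label, n in requested.items())


def count_success_rate(cases):
    """Fraction of (requested, detections) cases that pass count_success."""
    return sum(count_success(req, det) for req, det in cases) / len(cases)


cases = [
    ({"cat": 2}, [("cat", 0.9), ("cat", 0.5), ("dog", 0.8)]),  # two cats found
    ({"cat": 2}, [("cat", 0.9), ("cat", 0.2)]),                # second cat below threshold
]
print(count_success_rate(cases))
```

Requiring an exact match penalizes both missing and extra instances, which is the failure mode count prompts are designed to expose.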

Paperspace Demo


Use the link provided with the article to start the notebook on Paperspace and follow the tutorial.

- Clone the repo, then install and update the required libraries

```python
!git clone https://github.com/limuloo/MIGC.git
%cd MIGC
!pip install --upgrade --no-cache-dir gdown
!pip install transformers
!pip install torch
!pip install accelerate
!pip install pyngrok
!pip install opencv-python
!pip install einops
!pip install diffusers==0.21.1
!pip install omegaconf
!pip install -U transformers
!pip install -e .
```

- Import the required modules

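```python
import os
from contextlib import nullcontext

import yaml
# Added: these imports are used by offlinePipelineSetupWithSafeTensor below
from accelerate import init_empty_weights
from diffusers import EulerDiscreteScheduler
from diffusers.utils import is_accelerate_available
from transformers import CLIPTextModel, CLIPTokenizer

from migc.migc_utils import seed_everything
from migc.migc_pipeline import StableDiffusionMIGCPipeline, MIGCProcessor, AttentionStore
```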

- Download the pre-trained weights and save them to your directory

```python
!gdown --id 1v5ik-94qlfKuCx-Cv1EfEkxNBygtsz0T -O ./pretrained_weights/MIGC_SD14.ckpt
!gdown --id 1cmdif24erg3Pph3zIZaUoaSzqVEuEfYM -O ./migc_gui_weights/sd/cetusMix_Whalefall2.safetensors
!gdown --id 1Z_BFepTXMbe-cib7Lla5A224XXE1mBcS -O ./migc_gui_weights/clip/text_encoder/pytorch_model.bin
```

- Create the function `offlinePipelineSetupWithSafeTensor`

This function sets up a pipeline for offline use: it loads a Stable Diffusion checkpoint from a `.safetensors` file, plugs in a CLIP text encoder and tokenizer, and injects the MIGC weights into the UNet's attention layers.

```python
def offlinePipelineSetupWithSafeTensor(sd_safetensors_path):
    migc_ckpt_path = "/notebooks/MIGC/pretrained_weights/MIGC_SD14.ckpt"
    clip_model_path = "/notebooks/MIGC/migc_gui_weights/clip/text_encoder"
    clip_tokenizer_path = "/notebooks/MIGC/migc_gui_weights/clip/tokenizer"
    original_config_file = "/notebooks/MIGC/migc_gui_weights/v1-inference.yaml"

    # Use accelerate's empty-weight context when available, otherwise a no-op context
    ctx = init_empty_weights if is_accelerate_available() else nullcontext
    with ctx():
        # text_encoder = CLIPTextModel(config)
        text_encoder = CLIPTextModel.from_pretrained(clip_model_path)
        tokenizer = CLIPTokenizer.from_pretrained(clip_tokenizer_path)

    # Build the Stable Diffusion pipeline from a single .safetensors checkpoint
    pipe = StableDiffusionMIGCPipeline.from_single_file(
        sd_safetensors_path,
        original_config_file=original_config_file,
        text_encoder=text_encoder,
        tokenizer=tokenizer,
        load_safety_checker=False,
    )
    print('Initializing pipeline')
    pipe.attention_store = AttentionStore()

    # Load the MIGC weights into the UNet's attention processors
    from migc.migc_utils import load_migc
    load_migc(pipe.unet, pipe.attention_store,
              migc_ckpt_path, attn_processor=MIGCProcessor)

    pipe.scheduler = EulerDiscreteScheduler.from_config(pipe.scheduler.config)
    return pipe
```

- Call the function

Pass the path `'./migc_gui_weights/sd/cetusMix_Whalefall2.safetensors'` to `offlinePipelineSetupWithSafeTensor()` to set up the pipeline. After setting up the pipeline, we move the entire pipeline to the CUDA device for GPU acceleration using the `.to("cuda")` method.

```python
pipe = offlinePipelineSetupWithSafeTensor('./migc_gui_weights/sd/cetusMix_Whalefall2.safetensors')
```

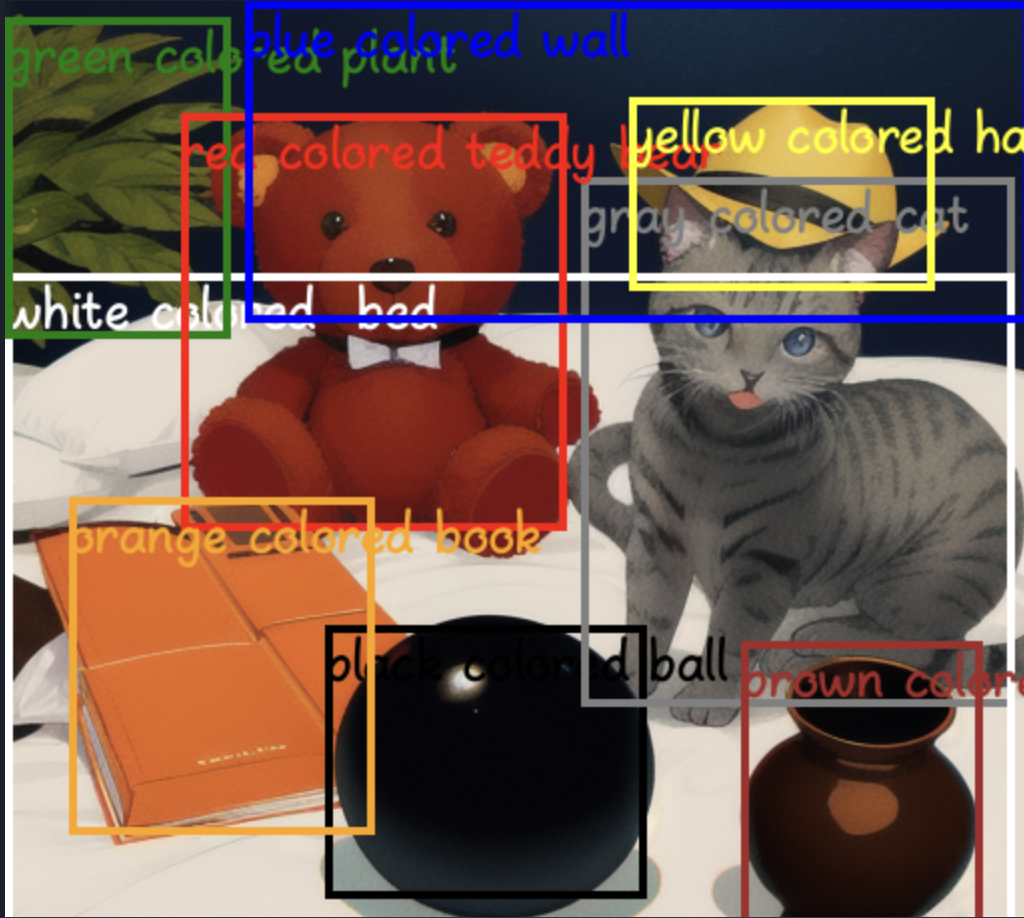

```python
pipe = pipe.to("cuda")
```

- We'll use the created pipeline to generate an image. The image is generated from the provided prompts and bounding boxes; the code then annotates it with the bounding boxes and descriptions, and saves and displays the results.

```python
# The first entry is the global prompt; the remaining entries describe each instance
prompt_final = [[
    'masterpiece, best quality,black colored ball,gray colored cat,white colored bed,'
    'green colored plant,red colored teddy bear,blue colored wall,brown colored vase,orange colored book,'
    'yellow colored hat',
    'black colored ball', 'gray colored cat', 'white colored bed', 'green colored plant',
    'red colored teddy bear', 'blue colored wall', 'brown colored vase', 'orange colored book', 'yellow colored hat',
]]

# One box (normalized coordinates) per instance description, in the same order
bboxes = [[[0.3125, 0.609375, 0.625, 0.875], [0.5625, 0.171875, 0.984375, 0.6875],
           [0.0, 0.265625, 0.984375, 0.984375], [0.0, 0.015625, 0.21875, 0.328125],
           [0.171875, 0.109375, 0.546875, 0.515625], [0.234375, 0.0, 1.0, 0.3125],
           [0.71875, 0.625, 0.953125, 0.921875], [0.0625, 0.484375, 0.359375, 0.8125],
           [0.609375, 0.09375, 0.90625, 0.28125]]]

negative_prompt = "worst quality, low quality, bad anatomy, watermark, text, blurry"

seed = 7351007268695528845
seed_everything(seed)

image = pipe(prompt_final, bboxes, num_inference_steps=30, guidance_scale=7.5,
             MIGCsteps=15, aug_phase_with_and=False, negative_prompt=negative_prompt,
             NaiveFuserSteps=30).images[0]
image.save('output1.png')
image.show()

# Overlay the boxes and instance descriptions on the generated image
image = pipe.draw_box_desc(image, bboxes[0], prompt_final[0][1:])
image.save('anno_output1.png')
image.show()
```
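Before changing the layout, it helps to see where the normalized boxes land in pixel space. The helper below is a hypothetical sketch, not part of the MIGC repo: it assumes the boxes are `[x0, y0, x1, y1]` fractions of a 512×512 output (the usual Stable Diffusion 1.x resolution) and converts the demo's boxes to pixel coordinates. It also checks that every coordinate is a multiple of 1/64, which holds for all the demo boxes; that would be consistent with a 64×64 latent grid, though this is our inference rather than something the repo documents.

```python
def to_pixel_box(box, width=512, height=512):
    """Convert a normalized [x0, y0, x1, y1] box to integer pixel coordinates.

    The 512x512 default is an assumption about the output resolution."""
    x0, y0, x1, y1 = box
    return (round(x0 * width), round(y0 * height), round(x1 * width), round(y1 * height))


def on_grid(box, cells=64):
    """True if every coordinate lies on a cells x cells grid (steps of 1/cells)."""
    return all(abs(v * cells - round(v * cells)) < 1e-9 for v in box)


# Instance descriptions and boxes copied from the demo above
descriptions = ['black colored ball', 'gray colored cat', 'white colored bed', 'green colored plant',
                'red colored teddy bear', 'blue colored wall', 'brown colored vase',
                'orange colored book', 'yellow colored hat']
boxes = [[0.3125, 0.609375, 0.625, 0.875], [0.5625, 0.171875, 0.984375, 0.6875],
         [0.0, 0.265625, 0.984375, 0.984375], [0.0, 0.015625, 0.21875, 0.328125],
         [0.171875, 0.109375, 0.546875, 0.515625], [0.234375, 0.0, 1.0, 0.3125],
         [0.71875, 0.625, 0.953125, 0.921875], [0.0625, 0.484375, 0.359375, 0.8125],
         [0.609375, 0.09375, 0.90625, 0.28125]]

for desc, box in zip(descriptions, boxes):
    print(f"{desc:>22}: {to_pixel_box(box)}  on 1/64 grid: {on_grid(box)}")
```

When editing the layout for your own prompts, keeping one box per description and the same order in both lists is what matters; snapping to the 1/64 grid is optional.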

We highly recommend that readers click the link to access the complete notebook and experiment further with the code.

Conclusion

This research tackles a challenging task called MIG and introduces a solution called MIGC to improve the performance of Stable Diffusion on MIG tasks. Its key idea is a divide-and-conquer strategy that breaks the complex MIG task into simpler subtasks focused on shading individual objects. Each object's shading is further enhanced using an attention layer, and all the shaded objects are then combined using another attention layer and a controller. Several experiments were conducted on the proposed COCO-MIG dataset as well as widely used benchmarks such as COCO-Position and DrawBench. We experimented with MIGC ourselves, and the results show that MIGC is efficient and effective.

We hope you enjoyed reading this article as much as we enjoyed writing it and experimenting on the Paperspace platform.