Chatbots are used by millions of people around the world every day, powered by NVIDIA GPU-based cloud servers. Now, these groundbreaking tools are coming to Windows PCs powered by NVIDIA RTX for local, fast, custom generative AI.

Chat with RTX, now free to download, is a tech demo that lets users personalize a chatbot with their own content, accelerated by a local NVIDIA GeForce RTX 30 Series GPU or higher with at least 8GB of video random access memory, or VRAM.

Ask Me Anything

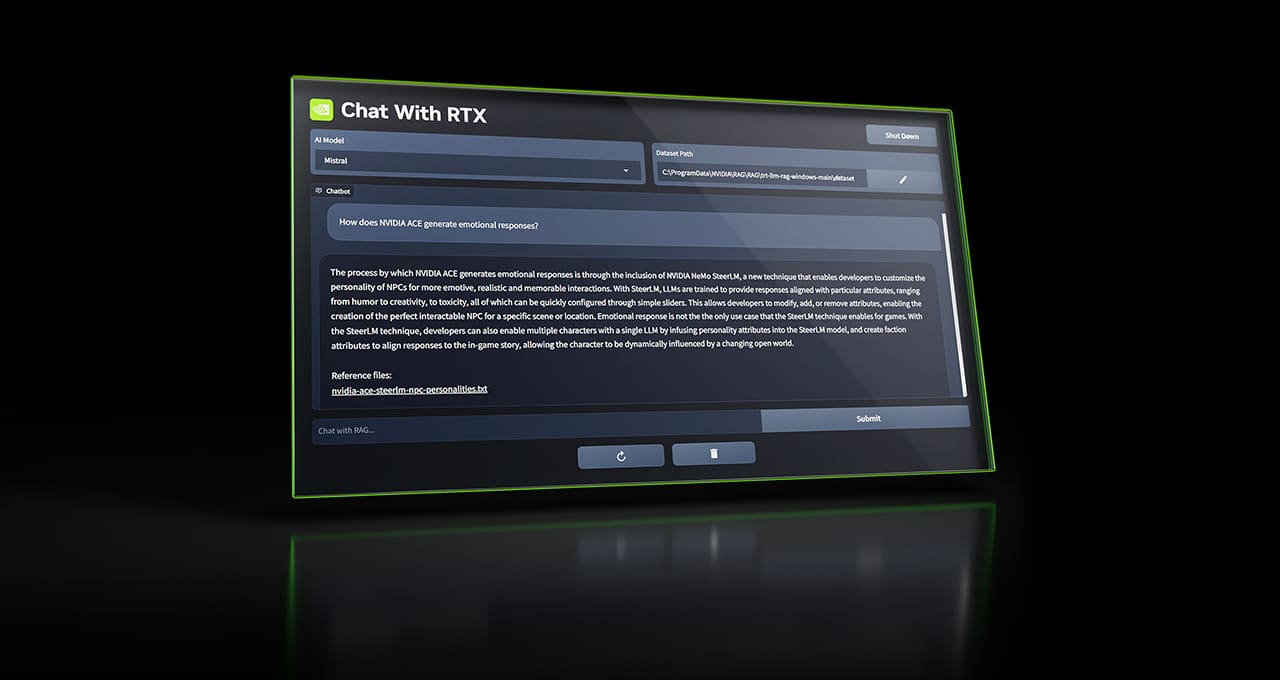

Chat with RTX uses retrieval-augmented generation (RAG), NVIDIA TensorRT-LLM software and NVIDIA RTX acceleration to bring generative AI capabilities to local, GeForce-powered Windows PCs. Users can quickly and easily connect local files on a PC as a dataset to an open-source large language model like Mistral or Llama 2, enabling queries for quick, contextually relevant answers.

Rather than searching through notes or saved content, users can simply type queries. For example, one could ask, “What was the restaurant my partner recommended while in Las Vegas?” and Chat with RTX will scan the local files the user points it to and provide the answer with context.
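To make the retrieval step concrete, here is a minimal sketch of the idea behind RAG: find the local text most relevant to a question, then place it in front of the question as context for the language model. This is not how Chat with RTX is implemented. The word-count similarity below stands in for the embedding search a real system would likely use, no LLM is called, and the function names, file names and note contents are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, top_k=1):
    """Return the names of up to top_k documents that share words with the query."""
    query_vec = Counter(tokenize(query))
    scored = [
        (cosine_similarity(query_vec, Counter(tokenize(text))), name)
        for name, text in documents.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Documents with no overlap at all are dropped rather than returned.
    return [name for score, name in scored[:top_k] if score > 0]


def build_prompt(query, documents, top_k=1):
    """Prepend the retrieved passages to the question before it goes to an LLM."""
    names = retrieve(query, documents, top_k)
    context = "\n".join(f"[{name}] {documents[name]}" for name in names)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical notes standing in for a user's local files.
notes = {
    "vegas_trip.txt": "My partner recommended the restaurant Example Bistro in Las Vegas.",
    "groceries.txt": "Buy milk, eggs and coffee beans.",
}
question = "What was the restaurant my partner recommended while in Las Vegas?"
print(build_prompt(question, notes))
```

The resulting prompt carries the matching note and its file name along with the question, which is what lets a model answer "with context" instead of from its training data alone.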

The tool supports various file formats, including .txt, .pdf, .doc/.docx and .xml. Point the application at the folder containing these files, and the tool will load them into its library in just seconds.
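As a rough illustration of the folder-scanning step, the sketch below collects files with the extensions listed above. It is an assumption about how such a scan could look, not the application's actual code. The function name is hypothetical, and searching subfolders recursively is a choice made here, not something the article states.

```python
from pathlib import Path

# Extensions named in the article; anything else is skipped.
SUPPORTED_EXTENSIONS = {".txt", ".pdf", ".doc", ".docx", ".xml"}


def find_supported_files(folder):
    """Collect files under `folder` (including subfolders) with a supported extension."""
    root = Path(folder)
    return sorted(
        path
        for path in root.rglob("*")
        # Compare case-insensitively so "REPORT.PDF" is also picked up.
        if path.is_file() and path.suffix.lower() in SUPPORTED_EXTENSIONS
    )
```

Calling `find_supported_files("notes")` on a hypothetical `notes` folder returns a sorted list of `Path` objects, which a later step could read and index.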

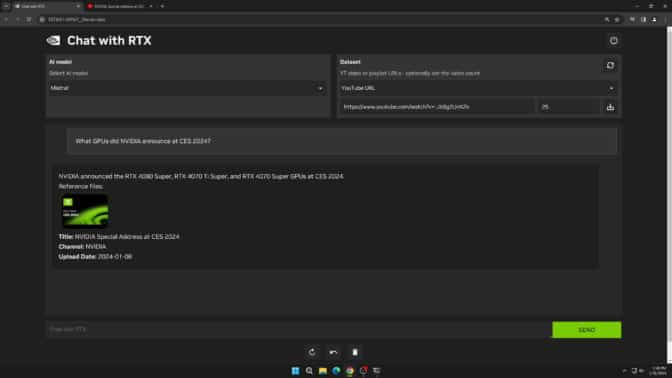

Users can also include information from YouTube videos and playlists. Adding a video URL to Chat with RTX allows users to integrate this knowledge into their chatbot for contextual queries. For example, ask for travel recommendations based on content from favorite influencer videos, or get quick tutorials and how-tos based on top educational resources.

Since Chat with RTX runs locally on Windows RTX PCs and workstations, the provided results are fast, and the user’s data stays on the device. Rather than relying on cloud-based LLM services, Chat with RTX lets users process sensitive data on a local PC without the need to share it with a third party or have an internet connection.

In addition to a GeForce RTX 30 Series GPU or higher with a minimum of 8GB of VRAM, Chat with RTX requires Windows 10 or 11 and the latest NVIDIA GPU drivers.

Develop LLM-Based Applications With RTX

Chat with RTX shows the potential of accelerating LLMs with RTX GPUs. The app is built from the TensorRT-LLM RAG developer reference project, available on GitHub. Developers can use the reference project to develop and deploy their own RAG-based applications for RTX, accelerated by TensorRT-LLM. Learn more about building LLM-based applications.

Enter a generative AI-powered Windows app or plug-in to the NVIDIA Generative AI on NVIDIA RTX developer contest, running through Friday, Feb. 23, for a chance to win prizes such as a GeForce RTX 4090 GPU, a full, in-person conference pass to NVIDIA GTC and more.

Learn more about Chat with RTX.